Software development teams are under constant pressure to deliver high-quality software faster than ever before. Balancing speed with quality and efficiency is a significant challenge. This article outlines practical strategies to help development teams streamline their workflows, optimize their processes, and deliver software faster and more efficiently.

Software delivery models

Efficient software delivery starts with selecting the right delivery model. Each model provides a structured approach to managing projects, ensuring smoother transitions from development to deployment.

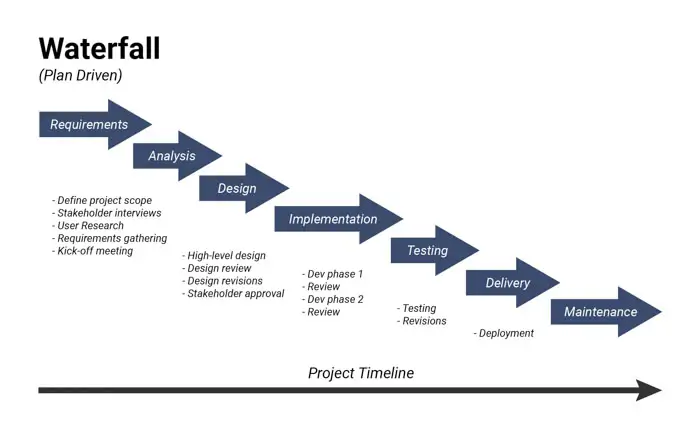

Waterfall delivery

Waterfall delivery follows a sequential design process, where each phase is completed before moving to the next. This strict adherence to sequential progression creates a structured, well-documented process, which is ideal for projects with well-defined requirements.

However, the model lacks flexibility for mid-project adjustments. This model is best suited for projects where changes are minimal or undesirable.

Lean delivery

Lean delivery is a software development methodology derived from lean manufacturing principles. It emphasizes maximizing value while minimizing waste, reducing batch sizes, and improving continuously.

Core principles include building quality in, amplifying learning, deferring commitment, delivering fast, respecting people, and optimizing the whole value stream. By focusing on the essentials, this model helps teams deliver high-priority features efficiently.

Agile delivery

Agile delivery is an iterative and incremental approach that emphasizes collaboration, flexibility, and rapid response to change. Teams work in short cycles (called sprints in Scrum), delivering working software increments frequently so that they can gather stakeholder feedback and adjust course.

This iterative development and focus on frequent delivery shorten time-to-market, keep the product aligned with evolving client needs, and maximize business value, making Agile a preferred choice for modern software delivery.

DevOps

DevOps is a set of practices, cultural philosophies, and tools that aim to automate and integrate the processes between software development (Dev) and IT operations (Ops). It emphasizes collaboration, communication, and automation throughout the entire software release lifecycle, from development and testing to deployment and operations.

Key practices include continuous integration (CI), continuous delivery (CD), infrastructure as code, and continuous monitoring, enabling organizations to deliver software faster, more reliably, and with greater agility.

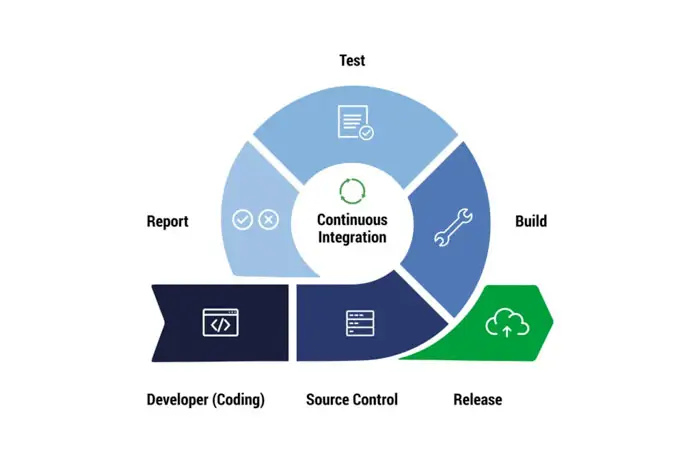

Continuous integration (CI)

Continuous Integration (CI) is a software development practice where developers regularly merge their code changes into a central repository, typically multiple times a day.

Each integration triggers automated build and test processes, which detect integration issues early in the development lifecycle and keep the main codebase in a stable, working state.
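The gate described above can be sketched in a few lines of Python. This is a minimal illustration, not a real CI system: the names `run_ci`, `is_mergeable`, `build`, and `unit_tests` are hypothetical, and the steps are plain functions standing in for the compiler and test runner a real pipeline would invoke.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class StepResult:
    name: str
    passed: bool


def run_ci(steps: List[Tuple[str, Callable[[], bool]]]) -> List[StepResult]:
    """Run each step in order and stop at the first failure."""
    results = []
    for name, check in steps:
        try:
            passed = bool(check())
        except Exception:
            passed = False  # a crashing step counts as a failed step
        results.append(StepResult(name, passed))
        if not passed:
            break  # fail fast: later steps are skipped
    return results


def is_mergeable(results: List[StepResult], expected_steps: int) -> bool:
    """A change may merge only if every expected step ran and passed."""
    return len(results) == expected_steps and all(r.passed for r in results)


# Hypothetical stand-ins; a real pipeline would call a compiler and a test runner.
def build() -> bool:
    return True


def unit_tests() -> bool:
    return 1 + 1 == 2


steps = [("build", build), ("unit tests", unit_tests)]
results = run_ci(steps)
print(is_mergeable(results, len(steps)))
```

Stopping at the first failed step keeps feedback fast, and requiring every step to have run prevents a partially executed pipeline from being mistaken for a passing one.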

Continuous delivery (CD)

Continuous Delivery (CD) is a software development practice that automates the release process, enabling frequent and reliable deployments of software changes to various environments, including production.

It builds upon Continuous Integration (CI) by automating the steps required to prepare and deploy software after it has been integrated and tested. Continuous Delivery keeps the software in a deployable state at all times, but the final deployment to production may still require a manual trigger, such as an explicit approval.
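The distinction between "always deployable" and "deployed to production" can be made concrete with a small sketch. The `ReleaseCandidate` class, the `can_deploy` function, and the check names below are assumptions made for illustration; they do not correspond to any particular CD tool.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, Optional


@dataclass
class ReleaseCandidate:
    version: str
    checks: Dict[str, bool] = field(default_factory=dict)  # check name -> passed?
    approved_by: Optional[str] = None  # set when a person approves the release

    def is_deployable(self, required_checks: Iterable[str]) -> bool:
        """Deployable means every required automated check has passed."""
        return all(self.checks.get(name, False) for name in required_checks)


def can_deploy(candidate: ReleaseCandidate, environment: str,
               required_checks: Iterable[str]) -> bool:
    """Lower environments deploy automatically; production needs an approval."""
    if not candidate.is_deployable(required_checks):
        return False
    if environment == "production":
        return candidate.approved_by is not None
    return True


REQUIRED = ("build", "tests")
rc = ReleaseCandidate("1.4.0", checks={"build": True, "tests": True})
print(can_deploy(rc, "staging", REQUIRED))     # automated checks are enough
print(can_deploy(rc, "production", REQUIRED))  # blocked until approved
rc.approved_by = "release-manager"
print(can_deploy(rc, "production", REQUIRED))
```

Removing the approval condition for production would turn this sketch into continuous deployment, where every passing change is released automatically.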

Key principles for building a strong software delivery foundation

A robust software delivery process relies on fundamental principles that ensure efficiency, transparency, and quality.

Transparency & visibility

Transparency and visibility are pivotal in maintaining clear communication and collaboration among team members and stakeholders.

These principles make the software delivery process visible to everyone involved, allowing all parties to monitor progress, identify potential bottlenecks, and make timely, informed decisions.

Tools such as real-time dashboards and status reports enhance visibility, keeping everyone aligned with project goals and timelines.

Predictable releases

Balancing frequent software updates with predictable release schedules is essential for successful software delivery. Frequent releases enable rapid responses to evolving requirements, while a predictable schedule gives users and stakeholders stability and reliability. This principle fosters agility in the development cycle without compromising the consistency and quality of deliverables.

Quality assurance & testing

Quality assurance (QA) and testing are cornerstones of software delivery, ensuring the final product meets functional, performance, and security standards.

QA involves rigorous testing practices, including unit testing, integration testing, and user acceptance testing, to identify and resolve defects early in the development process. By embedding robust QA mechanisms, organizations can reduce risks and enhance software reliability.

Feedback & improvement

Continuous feedback and iterative enhancement drive the ongoing optimization of software delivery. Regularly gathering input from users, developers, and operational teams helps identify inefficiencies and areas for improvement.

Implementing feedback loops and retrospectives allows teams to refine processes, adapt to changing needs, and maintain an efficient delivery pipeline over time.

Software delivery best practices

The software delivery pipeline is a structured sequence of stages that guide software from development to production. Each stage ensures collaboration, quality, and efficiency, culminating in a reliable product for end-users. Below are the essential stages of a well-optimized delivery pipeline:

Streamlined code commit

The pipeline begins with code commits, where developers contribute their changes to a shared repository using version control systems like Git.

This process enables collaboration, tracks changes, and maintains version history. By organizing the codebase efficiently, teams can work simultaneously on different features while minimizing conflicts.

Automated builds

In the build stage, the committed code is compiled and packaged into executable artifacts, such as binaries or libraries. This stage transforms the source code into deployable units, ensuring compatibility and readiness for subsequent testing. Automated build tools streamline this process, providing consistency and reliability.
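One way to picture the consistency that automated builds provide is a packaging step that yields the same artifact for the same inputs. The sketch below is an assumption-laden illustration: `build_artifact` is a hypothetical helper that zips in-memory sources using Python's standard `zipfile` module, fixes the file order and timestamps, and returns a SHA-256 digest that later stages could use to verify the artifact.

```python
import hashlib
import io
import zipfile
from typing import Dict, Tuple

# A fixed timestamp keeps the archive bytes independent of when the build runs.
FIXED_TIMESTAMP = (1980, 1, 1, 0, 0, 0)


def build_artifact(sources: Dict[str, bytes]) -> Tuple[bytes, str]:
    """Package sources into a zip archive and return (bytes, sha256 digest)."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as archive:
        for name in sorted(sources):  # stable file order
            info = zipfile.ZipInfo(name, date_time=FIXED_TIMESTAMP)
            archive.writestr(info, sources[name])
    data = buffer.getvalue()
    return data, hashlib.sha256(data).hexdigest()


# Hypothetical source files for illustration only.
artifact, digest = build_artifact({
    "app.py": b"print('hello')\n",
    "util.py": b"VERSION = '1.4.0'\n",
})
print(len(artifact) > 0, len(digest))
```

Because the digest depends only on the file names and contents, two builds of the same commit on the same build environment produce the same digest, which makes it easy to confirm that the artifact being tested is the one that gets deployed.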

Rigorous testing

Testing is a critical stage where automated test suites verify the software's functionality, performance, and security. This includes unit tests to check individual components, integration tests to validate interactions between modules, and acceptance tests to ensure the software meets end-user requirements. Rigorous testing minimizes risks and enhances software quality before deployment.
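The difference between a unit test and an integration test is easiest to see side by side. The example below uses Python's standard `unittest` module; `apply_discount` and `Cart` are hypothetical pieces of application code invented for this illustration.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical unit under test."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class Cart:
    """Hypothetical module that depends on apply_discount."""

    def __init__(self):
        self.items = []

    def add(self, price: float) -> None:
        self.items.append(price)

    def total(self, discount_percent: float = 0) -> float:
        return apply_discount(sum(self.items), discount_percent)


class UnitTests(unittest.TestCase):
    """Check one component in isolation."""

    def test_discount_is_applied(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_invalid_percent_is_rejected(self):
        with self.assertRaises(ValueError):
            apply_discount(100.0, 120)


class IntegrationTests(unittest.TestCase):
    """Check that Cart and apply_discount work together."""

    def test_cart_total_with_discount(self):
        cart = Cart()
        cart.add(40.0)
        cart.add(60.0)
        self.assertEqual(cart.total(discount_percent=10), 90.0)


def run_suite() -> unittest.TestResult:
    """Run both test classes the way a pipeline stage might."""
    loader = unittest.defaultTestLoader
    suite = unittest.TestSuite()
    suite.addTests(loader.loadTestsFromTestCase(UnitTests))
    suite.addTests(loader.loadTestsFromTestCase(IntegrationTests))
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

A pipeline stage would typically call something like `run_suite()` and fail the stage when `wasSuccessful()` returns `False`. Acceptance tests follow the same pattern but exercise the system through its user-facing interface.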

Efficient deployment

Validated software artifacts are deployed to target environments, such as staging or production. Deployment ensures that the software is accessible to end-users or stakeholders for evaluation.

Automation tools simplify this process, making rollouts across multiple environments repeatable and far less error-prone than manual deployment.
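A staged rollout can be sketched as a loop that promotes one artifact through an ordered list of environments and stops as soon as one of them looks unhealthy. Everything here is hypothetical: `promote`, `fake_deploy`, and `fake_health_check` are in-memory stand-ins for whatever deployment tooling a team actually uses.

```python
from typing import Callable, Dict, List, Optional, Tuple


def promote(
    artifact: str,
    environments: List[str],
    deploy: Callable[[str, str], None],
    health_check: Callable[[str], bool],
) -> Tuple[List[str], Optional[str]]:
    """Deploy to each environment in order; stop at the first unhealthy one.

    Returns the environments that passed their health check and the name of
    the environment that failed (or None if all of them passed).
    """
    healthy = []
    for env in environments:
        deploy(artifact, env)
        if not health_check(env):
            return healthy, env  # do not continue to later environments
        healthy.append(env)
    return healthy, None


# Hypothetical in-memory stand-ins for real deployment tooling.
running: Dict[str, str] = {}


def fake_deploy(artifact: str, env: str) -> None:
    running[env] = artifact


def fake_health_check(env: str) -> bool:
    return env in running


healthy, failed = promote(
    "app-1.4.0.zip", ["staging", "production"], fake_deploy, fake_health_check
)
print(healthy, failed)
```

Ordering the environments from least to most critical means a faulty artifact is caught in staging before it can reach production.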

Proactive monitoring

After deployment, the software is continuously monitored for performance, availability, and security. Key metrics such as response times, error rates, and resource utilization are tracked to detect and address issues proactively. Monitoring ensures the software operates reliably and meets user expectations in real-world conditions.
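As a rough illustration of how such metrics become alerts, the sketch below computes an error rate and a 95th-percentile response time from a batch of request records and compares them with thresholds. The `Request` type, the function names, and the threshold values are assumptions chosen for the example, not recommended settings.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class Request:
    latency_ms: float
    status: int  # HTTP status code


def error_rate(requests: List[Request]) -> float:
    """Fraction of requests that ended in a server error (5xx)."""
    if not requests:
        return 0.0
    errors = sum(1 for r in requests if r.status >= 500)
    return errors / len(requests)


def percentile(values: List[float], pct: int) -> float:
    """Nearest-rank percentile for an integer pct between 1 and 100."""
    if not values:
        raise ValueError("percentile of an empty list is undefined")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def check_health(
    requests: List[Request],
    max_error_rate: float = 0.05,  # assumed threshold
    max_p95_ms: float = 500.0,     # assumed threshold
) -> List[str]:
    """Return a list of alert messages; an empty list means healthy."""
    if not requests:
        return []
    alerts = []
    rate = error_rate(requests)
    if rate > max_error_rate:
        alerts.append(f"error rate {rate:.1%} exceeds {max_error_rate:.1%}")
    p95 = percentile([r.latency_ms for r in requests], 95)
    if p95 > max_p95_ms:
        alerts.append(f"p95 latency {p95:.0f} ms exceeds {max_p95_ms:.0f} ms")
    return alerts
```

Percentiles are used here instead of an average because a handful of very slow requests can hide behind a healthy-looking mean.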

Conclusion

Improving software delivery speed and efficiency is a multi-faceted endeavor requiring the right methodologies, adherence to foundational principles, and the adoption of best practices. By combining models like Agile and DevOps with automation and real-time monitoring, organizations can ensure seamless delivery of software that meets both business and client expectations.