LLMs in Cyber Security Outsourcing

When organizations outsource cybersecurity services involving LLMs, like AI-powered threat intelligence, automated incident response, security chatbots, and code review assistance, the OWASP LLM Top 10 becomes crucial for both the client and the service provider.

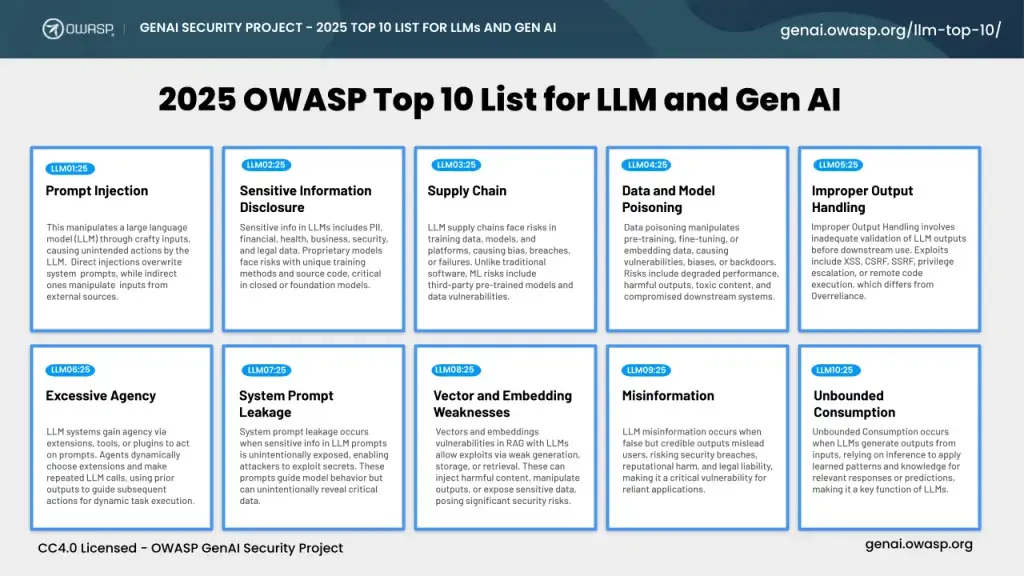

First released in 2023, the OWASP LLM Top 10 quickly emerged as a foundational guide, identifying the most critical security risks specifically associated with Large Language Models. This initiative by the Open Worldwide Application Security Project (OWASP), a non-profit foundation dedicated to improving software security, provides a crucial framework for understanding and mitigating vulnerabilities unique to LLM-powered applications.

- Risk Assessment: Both parties should assess the applicability of each OWASP LLM Top 10 risk to the specific LLM applications used or developed within the outsourced service.

- Contractual Obligations: Service Level Agreements (SLAs) and contracts should explicitly address security measures and responsibilities related to LLM risks, including mitigation strategies and incident response for LLM-specific vulnerabilities.

- Due Diligence: Clients should conduct thorough due diligence on potential outsourcing providers to ensure they have robust LLM security practices aligned with OWASP guidelines.

- Secure Development Lifecycle (SDLC): Outsourced development teams should integrate LLM security best practices throughout their SDLC, from design and development to testing and deployment.

- Continuous Monitoring and Auditing: Regular audits and monitoring of LLM application behavior are essential for detecting and responding to emerging threats, especially in dynamic outsourcing environments.

OWASP LLM Top 10 for Cyber Security

The OWASP Top 10 for LLM Applications identifies the most critical security risks associated with using large language models (LLMs), including prompt injection, insecure output handling, and data poisoning. These risks, along with model denial of service, supply chain vulnerabilities, sensitive information disclosure, insecure plugin design, excessive agency, overreliance, and model theft, highlight the need for robust security measures in LLM applications

LLM01: Prompt Injection

This remains the top concern. Attackers manipulate LLM behavior through crafted inputs (direct or indirect) to bypass safeguards, extract sensitive information, or perform unauthorized actions.

Examples:

- A malicious actor might inject carefully crafted instructions into a chatbot. This could force the chatbot to divulge its internal system prompts or unintentionally expose sensitive client data.

- Imagine this: An attacker injects specific commands into a chatbot, successfully manipulating it to reveal internal system prompts or private client information.

- In one scenario, an attacker could inject instructions into a chatbot, exploiting a vulnerability to reveal both internal system prompts and sensitive client data.

LLM02: Sensitive Information Disclosure:

LLMs unintentionally reveal private or proprietary information due to improper data sanitization, poor input handling, or overly permissive outputs.

Example:

- An LLM tasked with summarizing confidential legal documents inadvertently outputs Personally Identifiable Information (PII) from a client's case, resulting in a significant data breach.

- Consider this scenario: An LLM used for summarizing legal documents mistakenly includes specific PII, such as names, addresses, or Social Security numbers, from a client's case in its output.

- In one instance, an LLM summarizing legal documents unintentionally exposes PII from a client’s case, violating data protection regulations.

LLM03: Supply Chain Vulnerabilities:

Risks introduced by third-party components, services, or datasets used in the LLM's development or deployment. This can include malicious libraries or poisoned pre-trained models.

Example:

- An outsourced LLM development team unknowingly uses a compromised open-source model from a public repository, potentially introducing malicious code or backdoors into the final application.

- For instance, an outsourced LLM development team downloads and implements an open-source model from a public repository that has been tampered with, leading to security vulnerabilities and data breaches in the client's systems.

- An outsourced LLM team incorporates a compromised open-source model from a public repository, resulting in significant supply chain security risks.

LLM04: Data and Model Poisoning:

Attackers deliberately manipulate training data to influence LLM behavior, introduce biases, or create backdoors.

Example:

- A malicious insider or external attacker deliberately injects misleading or biased instructions into a dataset used to fine-tune an LLM for threat intelligence analysis, causing the LLM to produce flawed or inaccurate reports.

- For instance, a compromised dataset used to fine-tune an LLM for threat intelligence analysis contains harmful instructions injected by a malicious actor, leading the LLM to misclassify threats or overlook critical vulnerabilities.

- In a scenario of data poisoning, a malicious insider or external attacker subtly injects harmful instructions into a dataset for fine-tuning an LLM used in threat intelligence, aiming to manipulate the LLM's behavior without immediate detection.

LLM05: Improper Output Handling:

Neglecting to validate LLM outputs can lead to downstream security exploits, including code execution or data exposure when the output is consumed by other systems or users.

Example:

- An LLM generates a code snippet that, when executed by a downstream system, contains a critical vulnerability like a SQL injection due to insufficient sanitization of user-supplied inputs within the LLM's output.

- For instance, an LLM creates a code snippet intended for a web application, but the output lacks proper input validation and sanitization, leading to a cross-site scripting (XSS) vulnerability when the code is executed by a downstream system.

- Consider this: An LLM generates code that, when used by a downstream system, inadvertently exposes sensitive data or allows for unauthorized access because the LLM's output was not sufficiently sanitized against security threats.

LLM06: Excessive Agency:

Granting LLMs unchecked autonomy to take actions (especially in agentic architectures) can lead to unintended consequences, jeopardizing reliability, privacy, and trust.

Example:

- An LLM-powered security agent, given excessive authority, automatically initiates a critical system reset based on a faulty analysis of a routine log entry, leading to a significant system outage and data loss.

- Consider this: An LLM-powered security agent, with broad permissions, misinterprets network traffic patterns as a cyberattack and triggers an automated shutdown of essential services, causing a prolonged system outage.

- Due to unchecked autonomy, an LLM-powered security agent executes an irreversible action by isolating a crucial server segment after misinterpreting diagnostic data, resulting in a severe system outage and operational disruption.

LLM07: System Prompt Leakage:

Sensitive information or secrets contained within system prompts are exposed, potentially giving attackers insight into the LLM's internal workings or access to privileged information.

Example:

- A client service chatbot inadvertently divulges sensitive components of its system prompt, including active API keys and confidential internal policy details, potentially allowing unauthorized access and compromising system security.

- For instance, a client service chatbot accidentally displays parts of its system prompt that contain unencrypted API keys and proprietary internal policy information, which could be exploited by attackers to gain control of the system or bypass security measures.

- Consider this: A client service chatbot unintentionally leaks its system prompt, revealing API credentials and restricted internal policy data, resulting in a significant security breach and potential misuse of sensitive information.

LLM08: Vector and Embedding Weaknesses:

Vulnerabilities arising from the use of retrieval-augmented generation (RAG) and embedding-based methods, including unauthorized access, data leakage, or behavior alteration through malicious embeddings.

Example:

- An attacker secretly manipulates vector databases to inject deliberately false information, which an LLM then retrieves and presents as factual data to unsuspecting users, potentially causing significant misinformation or incorrect decision-making.

- For instance, an attacker poisons the vector database that an LLM relies on for context, injecting fabricated data that the LLM then regurgitates as truth, which can mislead users or compromise downstream systems.

- Consider this: An attacker alters the vector database used by an LLM with malicious entries, leading the LLM to retrieve and present false information, which results in biased recommendations or actions based on the flawed data.

LLM09: Misinformation:

LLMs produce credible-sounding yet false content (hallucinations or biases), leading to compromised decision-making, security vulnerabilities, or legal liabilities if users over-rely on unverified outputs.

Example:

- An LLM provides flawed cybersecurity guidance, such as suggesting ineffective mitigation strategies or reporting phantom threats as genuine, causing an organization to waste budget on unnecessary measures and overlook critical security gaps.

- Consider this: An LLM performing threat analysis generates false positive alerts, leading to a security team chasing nonexistent issues while actual vulnerabilities are ignored, resulting in compromised system integrity and potential data breaches.

- In one scenario, an LLM deceptively offers incorrect security advice that seems convincing but is fundamentally flawed. Consequently, the organization mistakenly prioritizes low-risk issues and overlooks actual threats, leading to resource misallocation and increased risk exposure..

LLM10: Unbounded Consumption:

Risks related to resource management and unexpected costs, where LLMs are overloaded with resource-heavy operations, leading to service disruptions or increased expenses.

Example:

- A malicious actor crafts complex and repetitive prompts specifically designed to overload an LLM's processing capacity, leading to a denial-of-service (DoS) attack or triggering substantial unexpected charges on an outsourced cloud computing bill.

- For instance, an attacker sends a series of specially crafted prompts that force an LLM into an infinite loop or excessive recursion, rapidly consuming computational resources and causing both a denial-of-service (DoS) and significant cost overruns for the service provider.

- Consider this: An attacker floods an LLM with computationally intensive prompts that dramatically inflate cloud costs, causing an outsourced service to incur thousands of dollars in unexpected expenses alongside a possible denial-of-service (DoS).

Promptfoo Introduction

Promptfoo Overview

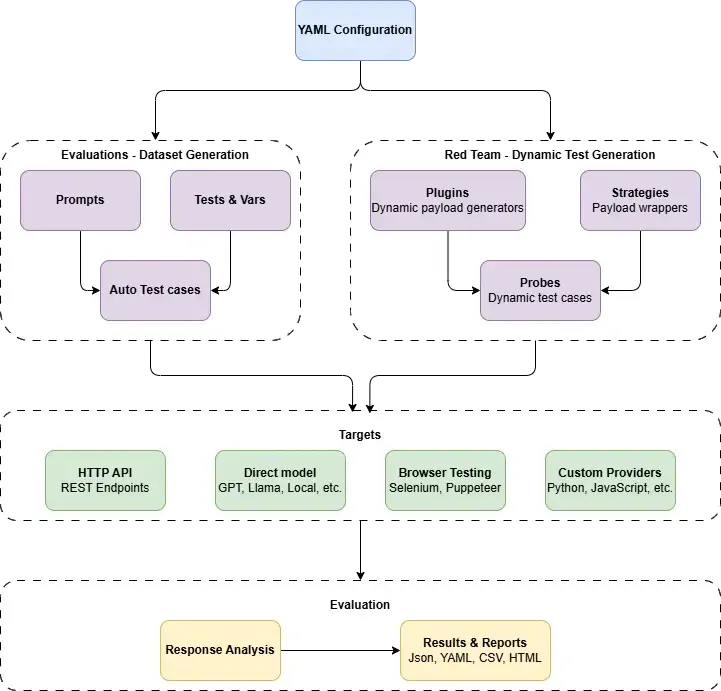

Promptfoo is an open-source framework designed to help developers and security teams test LLM applications against various risks, including those outlined in the OWASP LLM Top 10. It is particularly useful in an outsourced cybersecurity context for:

- Automated Red Teaming: Promptfoo can generate adversarial inputs and evaluate LLM responses to identify vulnerabilities like prompt injection, sensitive information disclosure, and misinformation. This allows for systematic testing of LLM applications provided by outsourcing partners.

- Pre-built OWASP Presets: Promptfoo offers pre-configured plugins and strategies specifically mapped to the OWASP LLM Top 10 vulnerabilities (e.g., owasp:llm:01 for Prompt Injection). This simplifies the process of testing against these known risks.

- Policy Enforcement: Organizations can define custom policies within Promptfoo to test if LLM outputs adhere to specific security, privacy, or ethical guidelines, which is vital when relying on external LLM services.

- Regression Testing: As LLM models or applications are updated (potentially by an outsourced team), Promptfoo can be used in CI/CD pipelines to ensure that new vulnerabilities are not introduced and existing mitigations remain effective.

- Transparency and Reporting: Promptfoo generates comprehensive reports detailing test results, allowing both the client and the outsourcing provider to have a clear understanding of the LLM application's security posture against the OWASP Top 10.

How Promptfoo Supports OWASP LLM Top 10 Testing

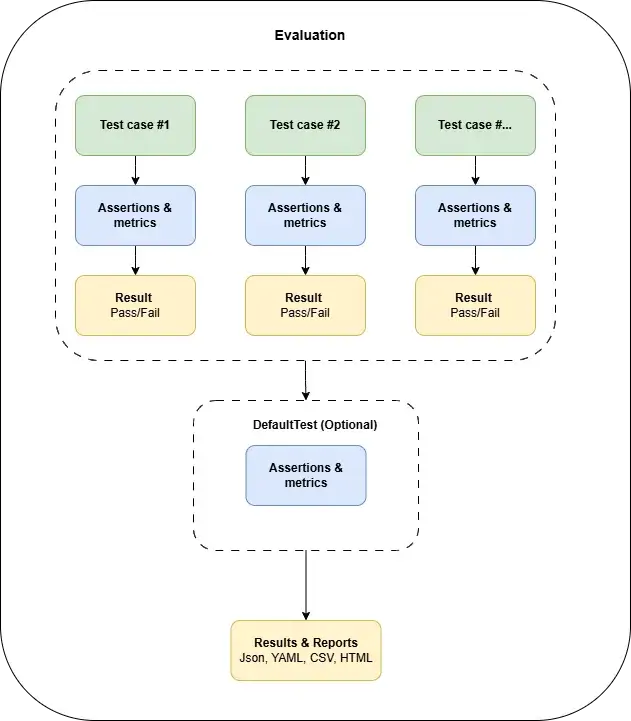

- Configuration: Define test cases, LLM endpoints, and desired security policies in a promptfooconfig.yaml file.

- Plugin Selection: Utilize Promptfoo's redteam functionality and select relevant OWASP LLM plugins (e.g., owasp:llm:01 for prompt injection, owasp:llm:02 for sensitive information disclosure).

- Strategy Application: Apply adversarial strategies (e.g., prompt-injection, jailbreak, leetspeak) to generate varied and malicious inputs.

- Execution: Run the tests against the LLM application.

- Evaluation: Promptfoo evaluates the LLM's responses based on defined criteria (e.g., detecting harmful outputs, checking for sensitive data leakage).

- Reporting: Review the generated report card, which enumerates the detected OWASP Top 10 vulnerabilities and their severities, providing actionable insights for remediation.

By systematically applying the OWASP LLM Top 10 framework and utilizing tools like Promptfoo, organizations can significantly enhance the security posture of LLM applications, especially when engaging with cybersecurity outsourcing services. This proactive approach helps in identifying and mitigating risks, ensuring the trustworthiness and resilience of AI-driven operations.

Apply Promptfoo for LLM Security Testing at TMA Solution

Use case 1: Local LLM SQL Generation

This project outlines the development of a sophisticated Local Large Language Model (LLM)-powered system that serves as a multi-functional interface for data interaction. Building upon a robust chatbot foundation, this LLM will possess the advanced capabilities to generate accurate SQL queries directly from natural language input and formulate insightful questions based on conversational context or underlying data schemas.

A paramount focus of this initiative is ensuring the absolute security and privacy of client information. We are committed to preventing the LLM from inadvertently disclosing any sensitive data, whether directly in responses or through generated queries. To achieve this, we will implement a rigorous LLM security testing strategy leveraging Promptfoo. This will involve:

- Targeted Security Testing for Client Data: Promptfoo will be used to create specific test cases designed to probe for vulnerabilities related to sensitive information disclosure (aligning with OWASP LLM02). This includes crafting prompts that attempt to elicit client PII, financial details, or other confidential data. Promptfoo's assertion capabilities (e.g., not-contains for sensitive keywords, custom JavaScript assertions for PII patterns) will be critical to automatically identify and flag any potential leakage. We will also test for prompt injection (OWASP LLM01) that could lead to data exfiltration.

- Comprehensive Automation Testing for LLM Performance: Beyond security, Promptfoo will be extensively utilized to perform automation testing for the LLM's core functions. This includes:

- SQL Query Accuracy: Testing the LLM's ability to consistently generate correct and efficient SQL queries for diverse natural language requests.

- Question Generation Quality: Evaluating the relevance, clarity, and usefulness of the questions generated from natural language inputs.

- Chatbot Coherence and Relevance: Assessing the overall quality and consistency of chatbot responses across various conversational flows.

- Robustness and Reliability: Running large suites of tests to ensure the LLM performs reliably under different conditions and edge cases.

By integrating these robust security and automation testing methodologies with Promptfoo, and guided by the principles of the OWASP Top 10 for Large Language Models, we aim to develop a Local LLM system that is not only highly functional in generating SQL and questions within a chatbot environment, but also fundamentally secure, privacy-preserving, and consistently reliable in its performance.

Use case 2: LLM Chatbot with KB

This project focuses on developing an intelligent chatbot powered by a Local Large Language Model (LLM). The chatbot will be enhanced by integrating with a project-specific Knowledge Base (KB) to provide accurate and relevant information. A critical objective is to ensure the absolute security and privacy of client information, preventing the LLM from inadvertently disclosing any sensitive data.

To achieve this, we will implement a robust LLM security testing framework using Promptfoo. This framework will specifically target vulnerabilities related to sensitive information disclosure. We will define a comprehensive suite of test cases designed to provoke potential data leaks, such as:

- Prompt injection attacks aimed at extracting client details from the LLM or KB.

- Queries are designed to trigger the retrieval of PII from the KB.

- Analysis of generated responses to identify any patterns or content that resembles client data.

Promptfoo will be used to automatically evaluate the LLM's responses against these security test cases, utilizing assertions like not-contains for sensitive keywords or JavaScript functions for more complex PII detection. This rigorous testing approach will ensure the chatbot remains secure, trustworthy, and compliant with privacy standards, specifically guaranteeing that it does not respond with client information.