Introduction

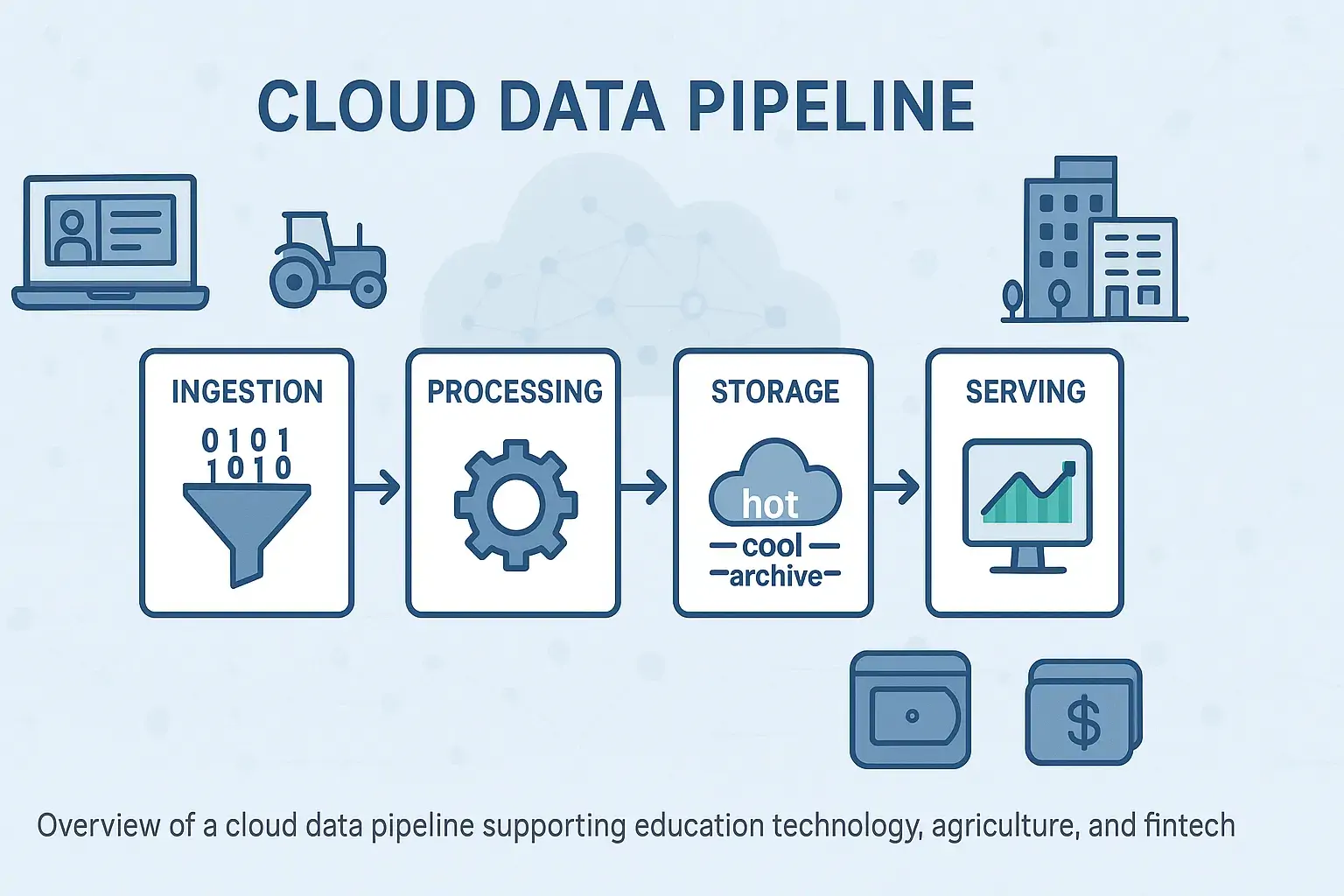

In today’s data-driven world, cloud data pipelines are the backbone of efficient, scalable, and innovative systems across industries. From education technology solutions powering learning management systems and digital classroom tools to IoT in agriculture, enabling crop monitoring solutions and livestock tracking technology, optimized data pipelines ensure seamless data flow while minimizing costs. By leveraging AI-powered automation, such as AI Agents development and machine learning solutions, organizations can process vast amounts of data with precision, whether for supply chain management, digital lending/cash-flow management, or smart building management systems in Vietnam PropTech. However, without careful design, these pipelines can lead to spiraling costs due to inefficient resource usage. This document explores strategies to optimize cloud data pipelines for cost efficiency, offering actionable insights for industries like fintech development services, real estate management software, and cloud-based veterinary clinic management, ensuring performance, scalability, and sustainability.

Why Cost Optimization Matters in Cloud Data Pipelines

Cloud platforms such as AWS and Azure offer flexibility and scalability; however, without proper controls, costs can escalate rapidly. Data engineers managing complex pipelines must ensure not only performance and reliability but also cost efficiency. By incorporating AI-powered automation, such as AI Agents solutions for enterprise, pipelines can dynamically adjust to workload demands, reducing waste. This is particularly relevant for industries like education technology solutions, where enterprise e-learning systems and self-learning platforms rely on efficient data pipelines to deliver e-learning software at scale.

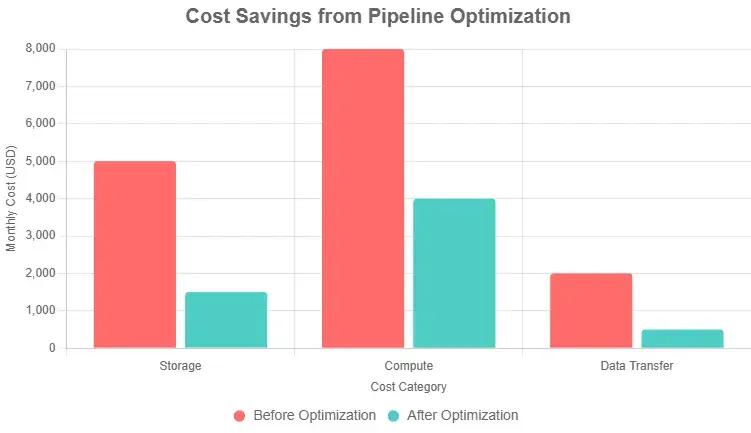

Optimizing a cloud data pipeline reduces unnecessary compute, storage, and data transfer charges while improving system responsiveness. When pipelines are lean, businesses save money, engineering teams work faster, and cloud ROI improves. For instance, smart building management systems in real estate and supply chain management in logistics both rely on streamlined data pipelines to support real-time operations.

Key Benefits:

Lower operational costs: Cut unused compute/storage spending.

Faster processing: Well-tuned pipelines reduce execution time, critical for real-time building monitoring or shipment tracking.

Improved scalability: Allocate resources where they matter, such as in smart warehouse solutions or e-commerce solutions.

Better visibility: Cost-aware designs support proactive monitoring, like real estate project progress tracking or cattle health monitoring.

Sustainable operations: Greener computing through smarter usage, as seen in IoT in agriculture for hydroponics systems or smart irrigation.

What Makes a Pipeline Cost-Efficient

Cloud data pipelines typically include data ingestion, transformation, storage, and serving layers. A cost-efficient pipeline ensures each layer runs at minimal resource usage without sacrificing performance or accuracy. For example, AI in education can optimize data pipelines for e-learning software, while AI demand forecasting in logistics enhances last-mile delivery efficiency.

Key Areas of Cost Waste

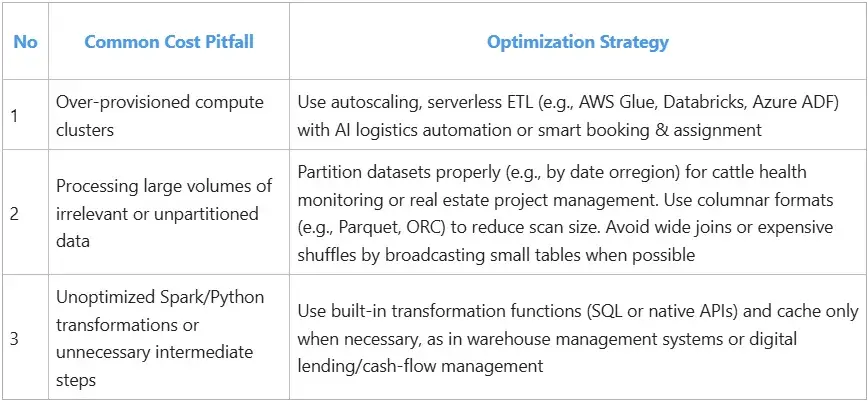

Understanding where costs typically accrue is the first step towards optimization. Key areas often prone to cost waste include:

Data Ingestion

Data Processing

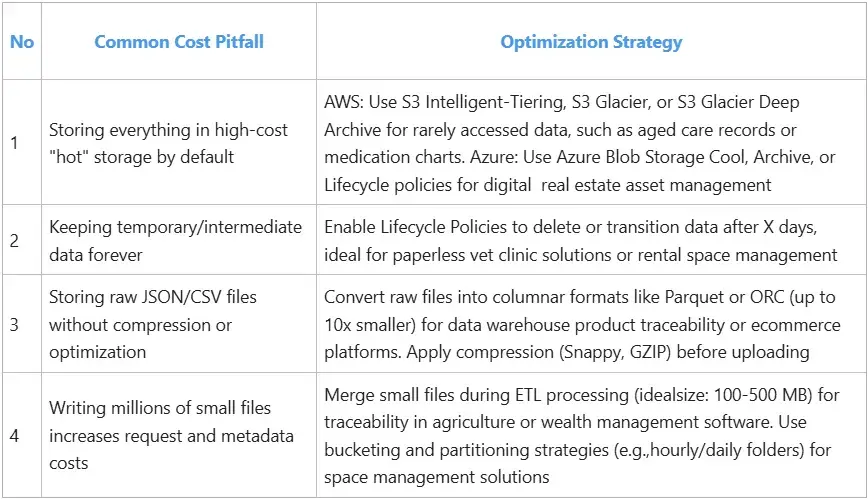

Data Storage

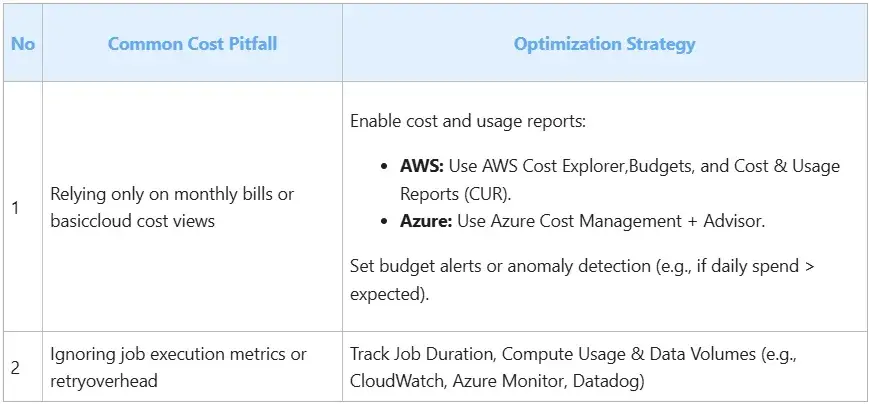

Monitoring

Why it works: Early detection = faster reaction = lower bills. For example, AI-powered digital signage or centralized advertising content management can integrate cost monitoring to optimize marketing spend.

Benefits:

Create granular budgets per service/team, such as strata management or HOA/homeowners association.

Use anomaly detection or alerting when cost exceeds thresholds, as in livestock tracking technology or pest detection.

Visualize spend trends across services (e.g., storage vs compute) for smart building management or digital wealth management.

5 Key Strategies to Optimize Your Pipeline

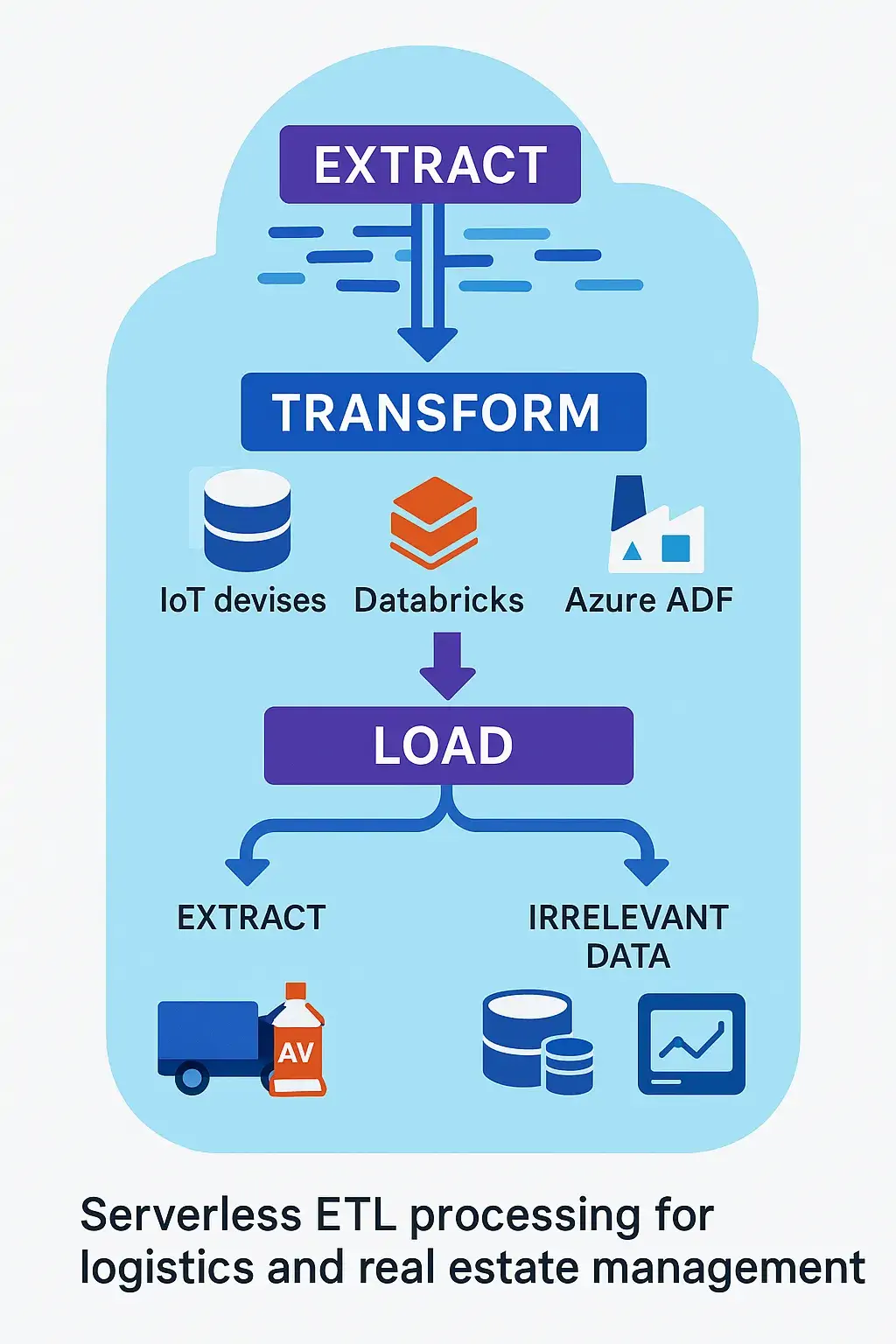

Choose Serverless for ETL When Possible

- Tools:

- AWS: Glue, Lambda, Step Functions

- Azure: Data Factory, Azure Functions, Databricks

- Why it works:

- Serverless ETL services auto-scale to workload, so you don’t pay for idle compute.

- No infrastructure management → lower DevOps/admin cost.

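- Billing follows usage rather than provisioned capacity: for example, DPU time (metered per second) for Glue jobs, and activity runs plus data movement volume for Data Factory.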

- Benefits:

- Lower idle cost compared to always-on clusters

- Auto-scalable and reduced admin overhead
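
To make the idle-cost argument concrete, here is a minimal sketch comparing a pay-per-use job with an always-on cluster. The rates, node counts, and workload shape are illustrative placeholders rather than quoted prices; substitute the figures from your own provider's pricing page.

```python
def always_on_monthly_cost(node_hourly_rate: float, nodes: int,
                           hours_in_month: float = 730.0) -> float:
    """Cost of a cluster that runs all month, whether or not it has work."""
    return node_hourly_rate * nodes * hours_in_month


def serverless_monthly_cost(rate_per_unit_hour: float, units: int,
                            run_minutes: float, runs_per_month: int) -> float:
    """Cost of a pay-per-use job that is billed only while it runs."""
    busy_hours = run_minutes * runs_per_month / 60.0
    return rate_per_unit_hour * units * busy_hours


# Hypothetical workload: a 20-minute job, once a day, on 10 capacity units,
# compared with a 4-node cluster that is kept running all month.
serverless = serverless_monthly_cost(rate_per_unit_hour=0.44, units=10,
                                     run_minutes=20, runs_per_month=30)
always_on = always_on_monthly_cost(node_hourly_rate=0.20, nodes=4)

print(f"serverless: {serverless:.2f}  always-on: {always_on:.2f}")
```

With these assumed numbers the job is busy for only 10 hours a month, so the serverless bill is a small fraction of the always-on one. The gap narrows as utilization rises, which is why the heading says "when possible": a pipeline that runs near-continuously may be cheaper on provisioned capacity.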

Adopt Tiered Storage

- Tools:

- AWS: S3 Standard / S3 Intelligent-Tiering / S3 Glacier

- Azure: Blob Storage Hot / Cool / Archive tiers, Data Lake Gen2 Lifecycle Policies

- Why it works:

- Not all data needs instant access. Old logs, historical reports, and archived datasets can live in low-cost cold storage.

- Cloud platforms provide automatic tier transitions via lifecycle policies.

- Benefits:

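- Storage cost per GB can drop by roughly 10x–20x when moving from standard to archival tiers, depending on region and tier. Archival tiers typically add retrieval fees and minimum storage durations, so they suit data that is rarely read.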

- Intelligent-Tiering on S3 saves cost without affecting performance for active data.
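
As an illustration of a lifecycle policy, the sketch below builds an S3 lifecycle configuration as a plain Python dictionary. The helper name, prefix, and day thresholds are hypothetical; the dictionary follows the shape that boto3's `put_bucket_lifecycle_configuration` accepts, but the call itself is left as a comment so the example runs without AWS credentials.

```python
def build_lifecycle_config(prefix: str, warm_after_days: int,
                           archive_after_days: int,
                           expire_after_days: int) -> dict:
    """Build an S3 lifecycle configuration for objects under `prefix`.

    Objects move to infrequent-access storage, then to Glacier, and are
    finally deleted. All thresholds are days since object creation.
    """
    if not 0 < warm_after_days < archive_after_days < expire_after_days:
        raise ValueError("expected 0 < warm < archive < expire")
    rule_id = "tier-" + (prefix.strip("/").replace("/", "-") or "all")
    return {
        "Rules": [
            {
                "ID": rule_id,
                "Filter": {"Prefix": prefix},
                "Status": "Enabled",
                "Transitions": [
                    {"Days": warm_after_days, "StorageClass": "STANDARD_IA"},
                    {"Days": archive_after_days, "StorageClass": "GLACIER"},
                ],
                "Expiration": {"Days": expire_after_days},
            }
        ]
    }


# Hypothetical policy for a "logs/" prefix: warm at 30 days, archive at 90,
# delete after one year.
config = build_lifecycle_config("logs/", 30, 90, 365)

# Applying it would look like this (bucket name is a placeholder):
# import boto3
# boto3.client("s3").put_bucket_lifecycle_configuration(
#     Bucket="my-bucket", LifecycleConfiguration=config)
```

Keeping the policy in code makes the thresholds reviewable and repeatable across buckets. Azure Blob Storage lifecycle management uses a different JSON schema, so this sketch applies to S3 only.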

Right-Size Compute Resources

- Tools:

- AWS: EMR Auto-scaling, Glue DPU limits, SageMaker Processing

- Azure: Databricks auto-scale, Synapse Spark pools

- Why it works:

- Over-provisioning is a hidden cost: you pay for CPU or memory that sits unused.

- Auto-scaling services adjust nodes based on current demand (e.g., Spark executors).

- Benefits:

- Pay for exactly what you use, no more.

- Reduces failed jobs due to memory overflows or throttling.

- Supports discounted, interruptible capacity such as Spot Instances on AWS and Spot VMs on Azure.
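
The auto-scaling idea can be sketched as a small sizing function. This is not how EMR, Databricks, or Synapse compute their targets internally; it is a simplified illustration, and the parameter names and numbers are assumptions.

```python
import math


def target_workers(pending_tasks: int, tasks_per_worker: int,
                   min_workers: int, max_workers: int) -> int:
    """Pick a worker count that matches the backlog, within fixed bounds.

    The floor keeps the pipeline responsive when idle; the ceiling caps
    spend when the backlog spikes.
    """
    if tasks_per_worker <= 0:
        raise ValueError("tasks_per_worker must be positive")
    if min_workers > max_workers:
        raise ValueError("min_workers must not exceed max_workers")
    wanted = math.ceil(pending_tasks / tasks_per_worker)
    return max(min_workers, min(max_workers, wanted))


# Hypothetical cluster limits: between 2 and 20 workers, 50 tasks each.
for backlog in (0, 430, 5000):
    print(backlog, target_workers(backlog, tasks_per_worker=50,
                                  min_workers=2, max_workers=20))
```

The upper bound matters as much as the scaling rule: without it, a runaway backlog translates directly into a runaway bill.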

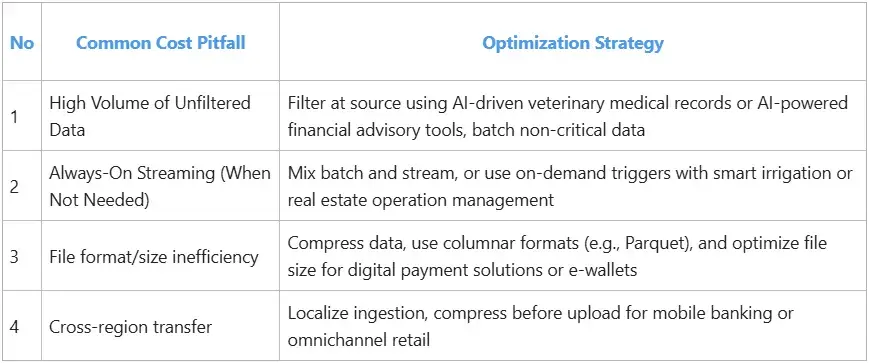

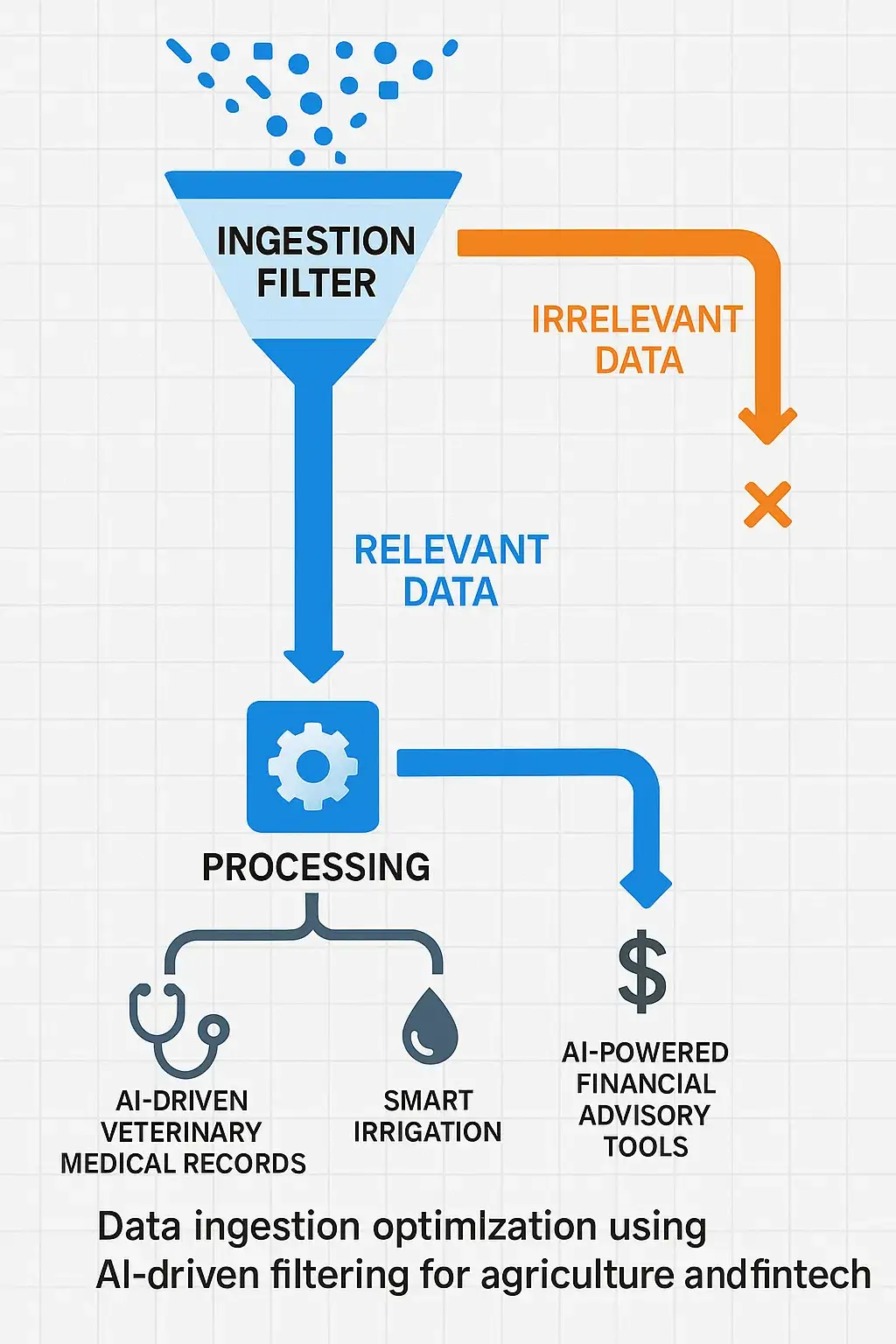

Implement Data Filtering at Ingestion

- Tools:

- AWS: Kinesis Firehose Transformations (Lambda), AWS IoT Rules Engine

- Azure: Event Grid filters, Stream Analytics

- Why it works:

- Reducing irrelevant or noisy data at ingestion reduces:

- Storage costs

- Processing costs

- Latency downstream

- Ingestion-side transformations help enforce schema validation, data quality, and field-level filtering.

- Benefits: Reduce downstream data volume and cost.
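
As one possible shape for ingestion-side filtering, the sketch below is a Lambda handler for a Firehose data transformation. It relies on the Firehose transformation contract (each record carries a `recordId` and base64 `data`, and the response marks each record as `Ok`, `Dropped`, or `ProcessingFailed`). The field names in `REQUIRED_FIELDS` are a hypothetical schema chosen for illustration.

```python
import base64
import json

# Hypothetical schema: only these fields are kept downstream.
REQUIRED_FIELDS = ("device_id", "timestamp", "value")


def lambda_handler(event, context):
    """Validate, trim, or drop each incoming record before it is stored."""
    output = []
    for record in event["records"]:
        record_id = record["recordId"]
        try:
            payload = json.loads(base64.b64decode(record["data"]))
        except (ValueError, TypeError):
            # Unparseable input: report the failure and return the data as-is.
            output.append({"recordId": record_id,
                           "result": "ProcessingFailed",
                           "data": record["data"]})
            continue

        if not isinstance(payload, dict) or any(
                field not in payload for field in REQUIRED_FIELDS):
            # Schema check failed: drop the record so it is never stored.
            output.append({"recordId": record_id,
                           "result": "Dropped",
                           "data": record["data"]})
            continue

        # Field-level filtering: keep only the columns downstream jobs need.
        slim = {field: payload[field] for field in REQUIRED_FIELDS}
        encoded = base64.b64encode(
            (json.dumps(slim) + "\n").encode("utf-8")).decode("utf-8")
        output.append({"recordId": record_id, "result": "Ok", "data": encoded})

    return {"records": output}
```

Dropping and trimming at this stage means every later layer (storage, transformation, serving) handles fewer bytes. Whether a malformed record should be dropped or marked as failed is a design choice; marking it as failed keeps it available for inspection.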

Monitor, Alert, and Visualize Cost Metrics

- Tools:

- AWS: Cost Explorer, CloudWatch, Budgets, CUR (Cost and Usage Reports)

- Azure: Cost Management + Billing, Azure Monitor, Budget Alerts

- Other: Datadog

- Why it works: Early detection = faster reaction = lower bills.

- Benefits:

- Create granular budgets per service/team.

- Use anomaly detection or alerting when the cost exceeds thresholds.

- Visualize spend trends across services (e.g., storage vs compute).
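
The alerting idea can be sketched with a simple rolling baseline. The managed anomaly detection in AWS and Azure uses its own models; this is a simplified illustration, and the window, threshold, and sample figures are assumptions.

```python
from statistics import mean, pstdev


def find_cost_anomalies(daily_costs, window=7, sigmas=3.0, budget=None):
    """Flag days whose cost exceeds a fixed budget or a rolling baseline.

    Returns a list of (day_index, cost, reason) tuples. The baseline is the
    mean of the previous `window` days; a day is a "spike" when it exceeds
    the baseline by more than `sigmas` standard deviations.
    """
    alerts = []
    for i in range(window, len(daily_costs)):
        history = daily_costs[i - window:i]
        baseline = mean(history)
        # Floor the spread so a perfectly flat history does not make
        # every tiny increase look anomalous.
        spread = max(pstdev(history), 0.01 * baseline)
        today = daily_costs[i]
        if budget is not None and today > budget:
            alerts.append((i, today, "over_budget"))
        elif today > baseline + sigmas * spread:
            alerts.append((i, today, "spike"))
    return alerts


# Hypothetical daily spend with one obvious jump on day index 7.
costs = [100, 102, 98, 101, 99, 100, 103, 180, 101]
print(find_cost_anomalies(costs))
```

In practice the daily figures would come from a billing export such as the Cost and Usage Reports, grouped per service or team so an alert points at the pipeline stage responsible.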

Real-World Case Studies by TMA Solutions

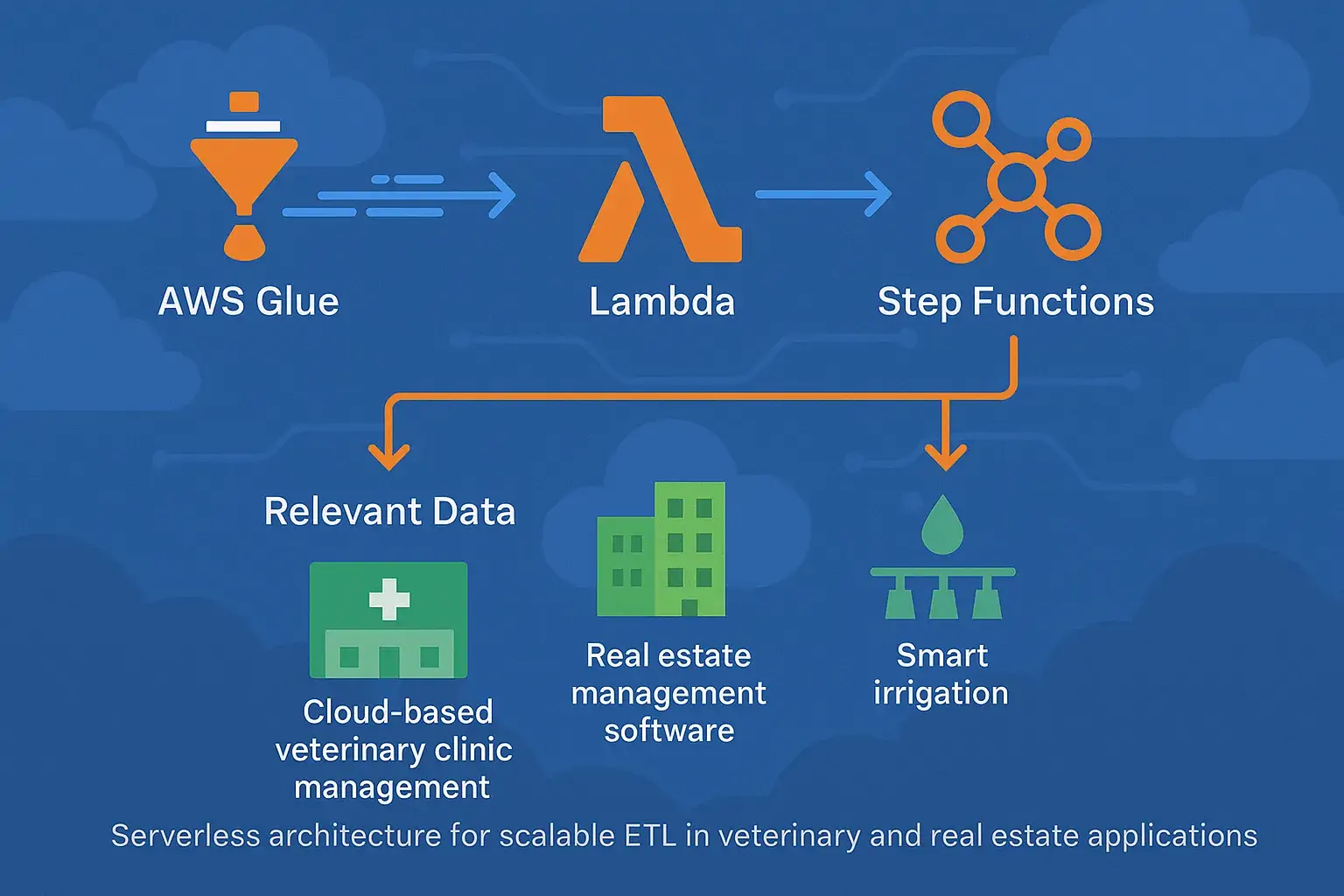

AWS Glue Jobs, Lambda Functions, Database Migration Service (DMS), and Step Functions for Scalable ETL

In a recent AWS-based data modernization project, TMA Solutions transformed a legacy, manual ETL workflow into a fully automated, serverless architecture. By leveraging AWS Glue for scalable data processing, Lambda for event-driven orchestration, and Step Functions to manage ETL pipelines, the team achieved:

40% reduction in data processing costs through pay-per-use serverless infrastructure, applicable to cloud-based veterinary clinic management or digital banking services.

2x faster pipeline execution, improving data availability for downstream analytics in AI for business management or real estate management software.

Simplified operations and reduced maintenance overhead by eliminating traditional compute provisioning, ideal for smart irrigation or building operation automation.

This implementation demonstrates how a serverless-first approach can significantly enhance both cost-efficiency and operational agility in cloud-based data platforms, such as AI-driven veterinary medical records or digital solutions for real estate.

Final Thoughts

Building cost-efficient cloud data pipelines is not only about saving money; it is also about building smarter, faster, and more sustainable systems. Begin with small wins: implement lifecycle policies, adopt serverless where possible, and monitor cost trends weekly. For industries like fintech R&D using blockchain and Web3, PropTech SaaS, or veterinary practice management, optimized pipelines enable scalable, cost-effective solutions.

Conclusion

Cost-efficient cloud data pipelines are critical for organizations aiming to maximize value while minimizing expenses. By adopting strategies such as serverless architectures, data filtering at ingestion, and intelligent storage tiering, businesses can achieve significant cost savings and operational agility. These optimizations support industries such as e-commerce (AI chatbots), real estate (platforms for strata and community management), and agriculture (traceability and pest detection). Furthermore, integrating AI-powered automation and edge AI helps keep pipelines cost-effective as workloads evolve, supporting innovations like NFT marketplace development for fintech, smart building management, and AI-driven veterinary medical records and practice management platforms. By prioritizing cost optimization, organizations can build sustainable, scalable systems across diverse sectors.

Take Action Now

Audit your cloud pipeline cost today using AWS Cost Explorer or Azure Cost Management.

Review pipeline design with performance and cost trade-offs in mind, leveraging AI-powered financial advisory tools or digital transformation in real estate.

Reach out to TMA Solutions to optimize or build new cost-efficient data platforms, including Web3 fintech platforms, smart building management, or cloud-based AI software for veterinarians.