Understanding the Shift from Traditional APIs to MCP

As AI agents and large language models (LLMs) become more prominent, their ability to interact with external tools and data becomes essential. Traditionally, this integration has relied on APIs. In late 2024, Anthropic introduced the Model Context Protocol (MCP), an open standard designed specifically for connecting AI applications to tools and data.

An API (Application Programming Interface) is a set of rules that allows systems to communicate. APIs are widely used, enabling services like payment processing or weather data retrieval. RESTful APIs, for example, operate over HTTP and use methods like GET or POST to interact with data.
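To make the GET/POST distinction concrete, here is a minimal sketch using only Python's standard library. The base URL, paths, and payload fields are hypothetical placeholders, and the requests are only constructed, never sent.

```python
import json
from urllib.request import Request

BASE_URL = "https://api.example.com"  # hypothetical service, for illustration only

# GET: read data; the parameters travel in the URL.
get_weather = Request(f"{BASE_URL}/weather?city=Hanoi", method="GET")

# POST: submit data; the payload travels in the request body.
create_payment = Request(
    f"{BASE_URL}/payments",
    data=json.dumps({"amount": 1000, "currency": "VND"}).encode("utf-8"),
    headers={"Content-Type": "application/json"},
    method="POST",
)

if __name__ == "__main__":
    # Nothing is sent over the network; we only inspect the request objects.
    for request in (get_weather, create_payment):
        print(request.get_method(), request.full_url)
```

Each service defines its own paths, parameters, and payload shapes, which is why every new API usually needs its own integration code.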

MCP, on the other hand, is purpose-built for LLMs. It standardizes how AI agents retrieve context and perform tasks. MCP uses a host-client-server architecture: the host is the AI application, which runs one client per connection, and each client talks to one MCP server using JSON-RPC 2.0 messages. MCP servers expose three key primitives: tools (actions the AI can perform), resources (read-only data), and prompts (reusable prompt templates).
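The message format is easier to picture with an example. The sketch below builds JSON-RPC 2.0 request objects as plain dictionaries, one listing request per primitive plus a tool invocation. The method names follow the MCP specification as we understand it; the helper `make_request`, the tool name `get_weather`, and its arguments are hypothetical, and no real transport or SDK is involved.

```python
import json
from itertools import count

_ids = count(1)  # each request carries an id so the reply can be matched to it


def make_request(method, params=None):
    """Build a JSON-RPC 2.0 request object as a plain dict."""
    request = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        request["params"] = params
    return request


# One listing request per primitive type.
list_tools = make_request("tools/list")
list_resources = make_request("resources/list")
list_prompts = make_request("prompts/list")

# Invoking a tool; the tool name and its arguments are made up for illustration.
call_tool = make_request(
    "tools/call",
    {"name": "get_weather", "arguments": {"city": "Hanoi"}},
)

if __name__ == "__main__":
    for message in (list_tools, list_resources, list_prompts, call_tool):
        print(json.dumps(message))
```

Every request has the same envelope regardless of which server receives it; only `method` and `params` change.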

A key advantage of MCP is dynamic discovery. An AI agent can query an MCP server at runtime to find out which tools, resources, and prompts it offers, unlike traditional API integrations, where client code usually has to be updated by hand when endpoints change. Because every MCP server speaks the same protocol, agents can interact with different services in a uniform way.
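Dynamic discovery can be sketched with a toy, in-memory stand-in for a server. This is not a real MCP implementation: `handle`, `discover_tools`, and both tools are hypothetical, and the only parts taken from the protocol are the `tools/list` method and the general shape of its result.

```python
# Tools the toy server currently offers; each entry describes its input with JSON Schema.
TOOLS = [
    {
        "name": "get_weather",
        "description": "Return the current weather for a city.",
        "inputSchema": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
]


def handle(request):
    """Toy server: answer tools/list, reject everything else."""
    if request["method"] == "tools/list":
        return {"jsonrpc": "2.0", "id": request["id"], "result": {"tools": list(TOOLS)}}
    return {
        "jsonrpc": "2.0",
        "id": request["id"],
        "error": {"code": -32601, "message": "Method not found"},
    }


def discover_tools(send):
    """Client side: ask the server what it offers and index the answer by name."""
    response = send({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
    return {tool["name"]: tool for tool in response["result"]["tools"]}


if __name__ == "__main__":
    print(sorted(discover_tools(handle)))

    # The server gains a tool; the client code does not change at all.
    TOOLS.append({
        "name": "get_forecast",
        "description": "Return a multi-day forecast for a city.",
        "inputSchema": {"type": "object", "properties": {"city": {"type": "string"}}},
    })
    print(sorted(discover_tools(handle)))
```

The client never hard-codes a tool list. It asks again and picks up whatever the server currently advertises.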

Unlike APIs, which often vary in structure and require custom integration, MCP follows a "build once, integrate many" model. Importantly, MCP and APIs are not competitors but complementary technologies. In many cases, MCP servers act as wrappers for APIs, translating MCP calls into backend API requests. This layered approach allows AI agents to benefit from MCP’s simplicity while leveraging API power underneath.
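The wrapper pattern can be sketched in a few lines. Here a handler receives an MCP-style `tools/call` request, translates it into a backend HTTP GET, and wraps the reply as text content. The endpoint `https://api.example.com/weather`, the tool `get_weather`, and the injected `http_get` function are all assumptions made for illustration; a fake fetcher stands in for the network so the example runs offline.

```python
import json
from urllib.parse import urlencode

API_BASE = "https://api.example.com/weather"  # hypothetical backend endpoint


def build_backend_url(arguments):
    """Translate tool arguments into the query string the backend API expects."""
    return f"{API_BASE}?{urlencode({'city': arguments['city']})}"


def handle_tool_call(request, http_get):
    """Turn an MCP-style tools/call request into a backend request and wrap the reply."""
    params = request["params"]
    if params["name"] != "get_weather":
        return {
            "jsonrpc": "2.0",
            "id": request["id"],
            "error": {"code": -32602, "message": f"Unknown tool: {params['name']}"},
        }
    body = http_get(build_backend_url(params["arguments"]))
    return {
        "jsonrpc": "2.0",
        "id": request["id"],
        "result": {"content": [{"type": "text", "text": body}]},
    }


def fake_http_get(url):
    """Stand-in for a real HTTP client; echoes the URL with a canned reading."""
    return json.dumps({"url": url, "temp_c": 31})


if __name__ == "__main__":
    call = {
        "jsonrpc": "2.0",
        "id": 7,
        "method": "tools/call",
        "params": {"name": "get_weather", "arguments": {"city": "Ho Chi Minh"}},
    }
    print(json.dumps(handle_tool_call(call, fake_http_get), indent=2))
```

Passing the HTTP function in as a parameter keeps the translation logic separate from the network call, so the same handler can be pointed at a real client later.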

Real-World Application: TMA’s Testing Copilot Powered by MCP

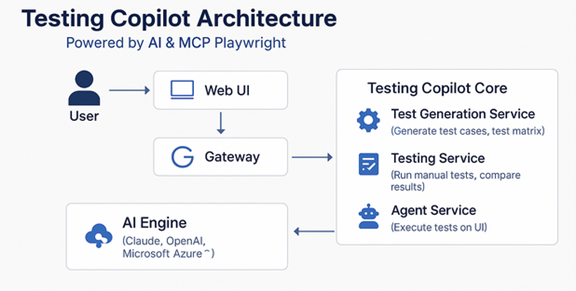

Testing Copilot is a new AI-powered tool developed by TMA to transform the way we do software testing. It is powered by MCP Playwright, a Model Context Protocol server that provides browser automation capabilities using Playwright. This allows our system to understand and execute test cases step by step, mimicking how a real tester interacts with a web application. Testing Copilot can analyze documents, generate detailed test cases, carry out test executions that would otherwise be done manually, and automatically convert them into automation scripts. Our main goal is to reduce the effort spent on repetitive testing, coding, and planning tasks while accelerating the CI/CD pipeline.

We believe that AI Testers are going to replace traditional testers, especially for repetitive and rule-based tasks. As Agentic AI becomes more integrated into all phases of the software development lifecycle (SDLC), it will significantly reduce the cost of software testing and improve overall delivery speed.

TMA plans to launch a company-wide proof of concept (PoC) this summer to demonstrate how Testing Copilot can support teams across projects. This is also our first step toward a new testing approach called “Vibe Testing”, where AI takes the lead in executing tests, helping QA teams focus on more strategic and high-impact areas.