Introduction

Artificial intelligence is transforming every corner of modern life, but not always for the better. One emerging threat in the telecom sector is AI-generated voice deepfakes, which allow attackers to replicate voices with chilling accuracy. This advancement has fueled a surge in voice-based fraud, where impersonation calls are used to deceive victims and extract sensitive information.

To counter this, a new class of AI-driven cybersecurity solutions is emerging. These technologies go beyond traditional network security by analyzing speech content in real time, identifying deepfake voices, and detecting attempts to extract private or sensitive information during live calls. This article explores how AI is defending telecom networks from evolving voice-based threats.

The Growing Threat of AI Voice Cloning

Using deep learning, cybercriminals can now replicate anyone’s voice — a family member, an executive, or even a government official — with just a few seconds of audio. These synthetic voices are nearly indistinguishable from the real thing and have already been used in high-profile scams.

Notable Incidents

- Hong Kong CEO Scam (2020): $35 million stolen using an AI-cloned voice of the CEO.

- U.S. Bank Fraud (2020): $1 million fraud involving a fake executive voice.

- Family Emergency Scam (2022): AI mimicked a daughter’s voice to request bail money from her mother.

- U.S. Election Misinformation (2024): Deepfakes impersonated politicians to manipulate voter sentiment.

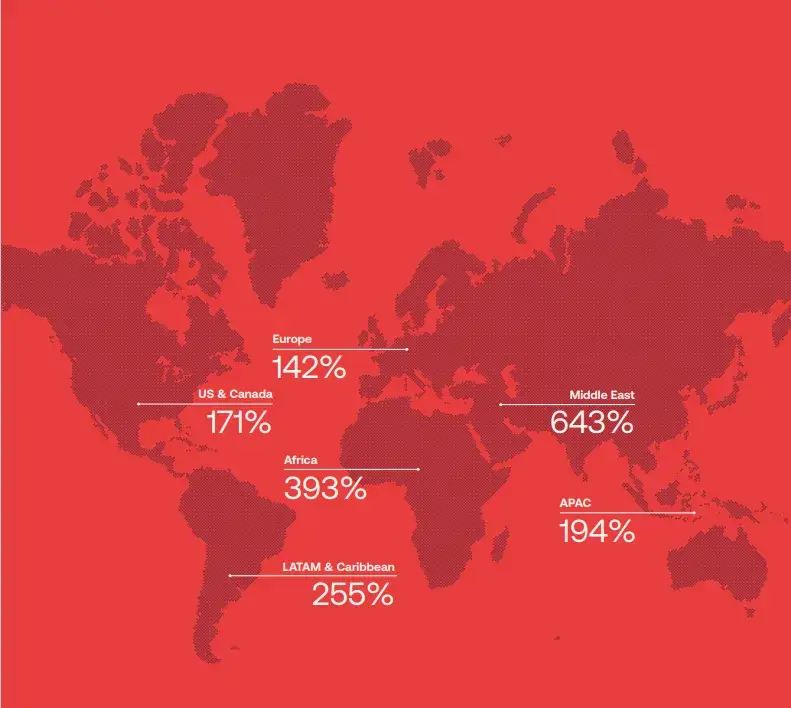

A McAfee survey found that 77% of victims targeted by AI voice scams reported financial losses, a sign that these attacks are not just theoretical but are actively undermining public trust.

Why Traditional Defenses Are Not Enough

Telecom systems are traditionally optimized to monitor call metadata, such as source and destination, not the voice content itself. This leaves networks blind to whether a voice is synthetically generated or attempting social engineering.

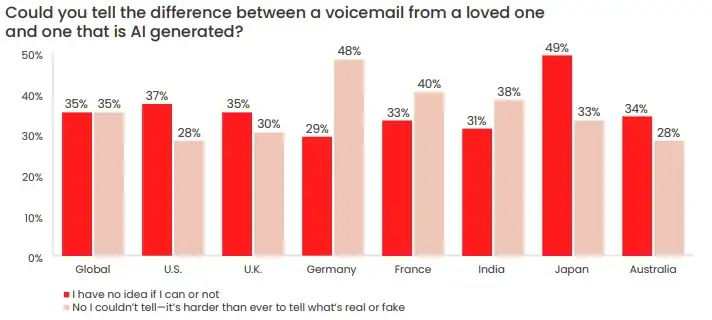

Caller ID checks, social cues, and anti-phishing measures all fall short when attackers can sound exactly like the person you trust. Humans cannot reliably distinguish real voices from fake ones, and legacy systems designed long before AI cloning became viable fare no better.

Research by McAfee found that voice-cloning tools can replicate how a person speaks with up to 95% accuracy, so telling real from fake is not easy. In fact, 70% of people said they were either unsure whether they could tell the difference (35%) or believed they could not (35%).

AI-Powered Defense: Core Technologies

To defend against these sophisticated threats, AI researchers and developers have introduced technologies focused on real-time voice analysis, biometric speaker verification, and, more recently, conversational content analysis.

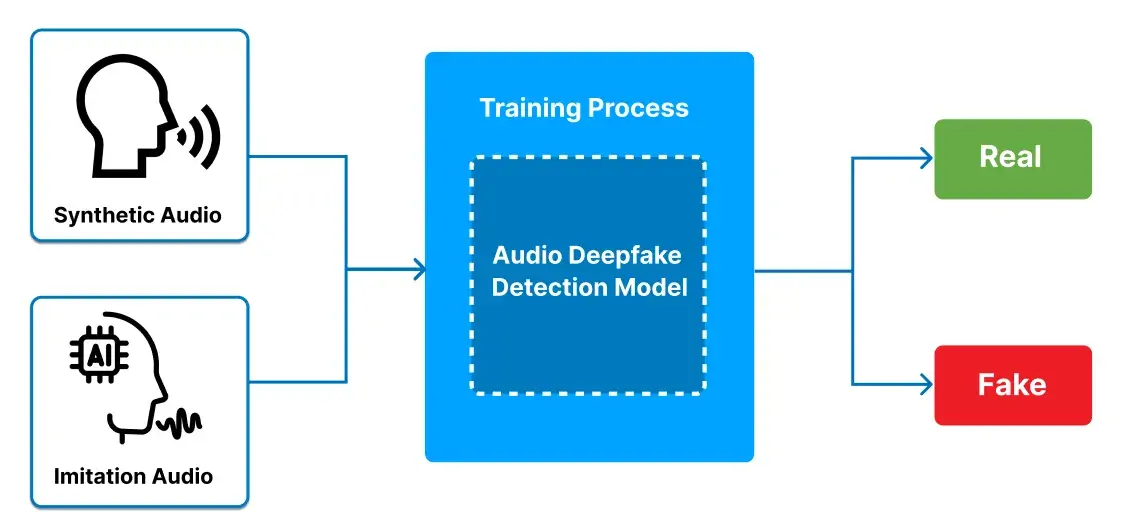

Voice Deepfake Detection

At the heart of voice security is the ability to detect when a voice has been artificially generated. AI models use self-supervised learning techniques to distinguish real human speech from synthetic audio.

- Extracts key features from spoken audio to understand and process speech.

- Detects signs of voice manipulation to identify potential deepfakes.

- Improves understanding of speech by analyzing the context within audio conversations.

These systems analyze acoustic signals in real time to flag and isolate deepfakes during ongoing conversations.
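As a rough illustration of the real-time flagging step, the sketch below smooths per-frame scores over a sliding window before raising a flag, so that a single noisy frame does not trigger an alert. It assumes an upstream detector (not shown, and hypothetical here) that emits a probability between 0.0 and 1.0 that each audio frame is synthetic. The function name, window size, and threshold are illustrative assumptions, not part of any specific product.

```python
from collections import deque
from typing import Iterable, Iterator, Tuple


def flag_synthetic_frames(
    frame_scores: Iterable[float],
    window: int = 5,
    threshold: float = 0.7,
) -> Iterator[Tuple[int, float, bool]]:
    """Yield (frame_index, smoothed_score, is_flagged) for each incoming score.

    frame_scores are assumed to be per-frame probabilities that the audio is
    synthetic, produced by a hypothetical upstream detector model.
    """
    recent = deque(maxlen=window)  # keeps only the last `window` scores
    for index, score in enumerate(frame_scores):
        recent.append(score)
        smoothed = sum(recent) / len(recent)  # moving average over the window
        yield index, smoothed, smoothed >= threshold


# Illustrative scores: the call starts clean, then the detector becomes suspicious.
scores = [0.1, 0.2, 0.9, 0.95, 0.9, 0.92, 0.97]
results = list(flag_synthetic_frames(scores, window=3, threshold=0.7))
first_flagged = next(i for i, _, flagged in results if flagged)
print("first flagged frame:", first_flagged)
```

Because the function is a generator, it can consume scores as they arrive during a live call rather than waiting for the call to end. A larger window reduces false alarms at the cost of slower reaction.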

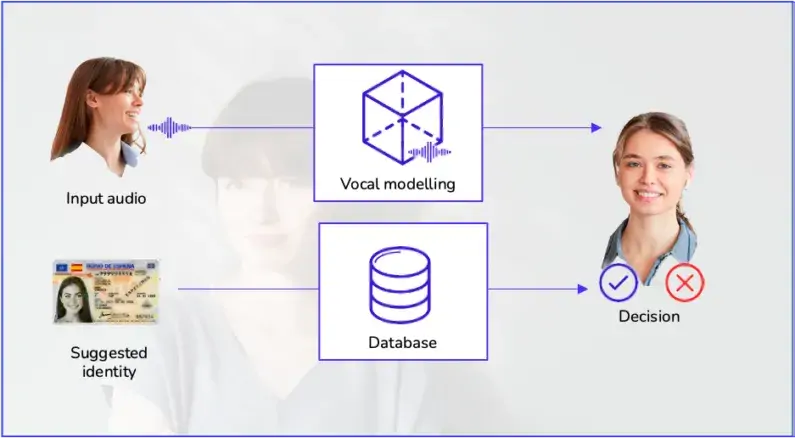

Speaker Verification via Voice Biometrics

Even when a voice sounds real, it may not belong to the right person. AI-based voice authentication systems create and compare voiceprints to verify identity.

- Captures unique voice patterns based on how a person speaks.

- Identifies who is speaking with high accuracy.

- Continuously compares live calls with known voice profiles to verify authenticity in real time.

This adds a biometric layer of identity assurance, particularly vital for executive or high-risk communication.
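The comparison step can be sketched as follows, assuming voiceprints are represented as fixed-length embedding vectors and compared with cosine similarity, which is a common choice but not necessarily what any given system uses. The embedding values and the 0.75 threshold are made up for illustration, and the model that produces the embeddings is out of scope here.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # a zero vector carries no speaker information
    return dot / (norm_a * norm_b)


def verify_speaker(
    enrolled: Sequence[float],
    live: Sequence[float],
    threshold: float = 0.75,  # illustrative value; real systems tune this on data
) -> bool:
    """Accept the live voiceprint if it is close enough to the enrolled one."""
    return cosine_similarity(enrolled, live) >= threshold


# Toy 4-dimensional voiceprints; real embeddings have far more dimensions.
enrolled_voiceprint = [0.12, 0.80, 0.35, 0.41]
same_speaker = [0.10, 0.78, 0.40, 0.39]
other_speaker = [0.90, 0.05, 0.10, 0.02]

print(verify_speaker(enrolled_voiceprint, same_speaker))
print(verify_speaker(enrolled_voiceprint, other_speaker))
```

For continuous verification, the same check would be repeated on embeddings computed from successive chunks of the live call.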

Sensitive Information Detection

While detecting voice impersonation is critical, an equally important layer is monitoring what is being said, especially when it comes to personal or sensitive data.

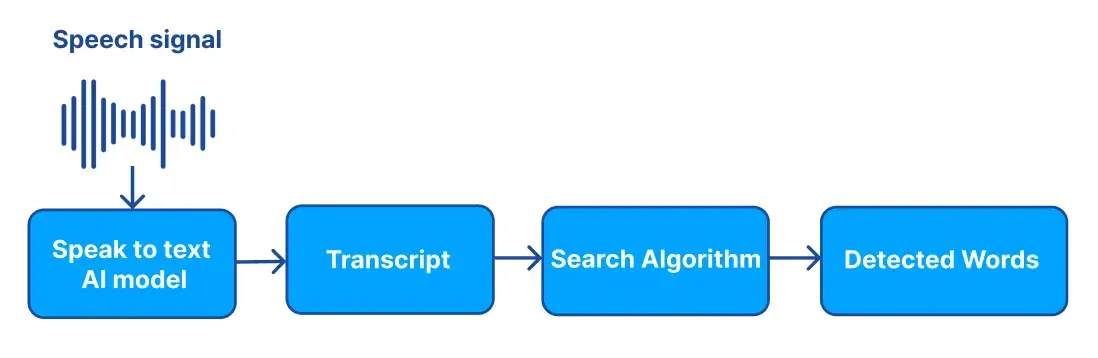

This capability uses a high-performance speech-to-text engine to transcribe conversations in real time. The resulting transcripts are then scanned using a multi-modal search framework to detect risks such as:

- Disclosure of personal identification numbers

- Bank account or payment details

- Location, address, or contact sharing

- Attempts to manipulate or deceive the speaker

The system breaks down conversations into meaningful segments to enable faster and more accurate searching. It thoroughly scans for high-risk words or phrases that may indicate potential issues. By combining both meaning-based analysis and keyword detection, it ensures a balanced and reliable approach to identifying important or risky content.
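A minimal sketch of this combined approach might look like the following. It splits a transcript into sentence-level segments, applies keyword and pattern matching, and adds a crude similarity check against known risky phrases. The token-overlap score is only a stand-in for meaning-based analysis, which in practice would more likely rely on an embedding model. All patterns, labels, and phrases below are hypothetical examples.

```python
import re
from typing import Dict, List

# Hypothetical keyword and pattern rules; a real deployment would maintain its own.
KEYWORD_PATTERNS: Dict[str, "re.Pattern[str]"] = {
    "payment_card": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "pin_or_code": re.compile(r"\b(?:pin|passcode|verification code)\b", re.IGNORECASE),
    "bank_account": re.compile(r"\b(?:account number|routing number|iban)\b", re.IGNORECASE),
}

# Hypothetical examples of manipulative phrasing to compare segments against.
RISK_PHRASES = [
    "do not tell anyone about this call",
    "transfer the money right now",
]


def split_segments(transcript: str) -> List[str]:
    """Split a transcript into sentence-like segments."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", transcript) if s.strip()]


def token_overlap(a: str, b: str) -> float:
    """Jaccard overlap of word sets; a simple stand-in for semantic similarity."""
    tokens_a = set(re.findall(r"[a-z']+", a.lower()))
    tokens_b = set(re.findall(r"[a-z']+", b.lower()))
    if not tokens_a or not tokens_b:
        return 0.0
    return len(tokens_a & tokens_b) / len(tokens_a | tokens_b)


def scan_transcript(transcript: str, similarity_threshold: float = 0.5) -> List[dict]:
    """Return one finding per segment that matches a rule or a risky phrase."""
    findings = []
    for segment in split_segments(transcript):
        labels = [name for name, pattern in KEYWORD_PATTERNS.items() if pattern.search(segment)]
        if any(token_overlap(segment, phrase) >= similarity_threshold for phrase in RISK_PHRASES):
            labels.append("manipulation_attempt")
        if labels:
            findings.append({"segment": segment, "labels": labels})
    return findings


sample = (
    "Hello, this is your bank. "
    "Please read me your account number and PIN. "
    "Transfer the money right now."
)
for finding in scan_transcript(sample):
    print(finding["labels"], "->", finding["segment"])
```

Keyword rules catch exact disclosures such as card numbers, while the similarity check is meant to catch paraphrased pressure tactics that no fixed keyword list would cover.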

If any risk pattern is detected, the system can warn the user, flag the call for review, or terminate the session, depending on the security policy.
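One way to express such a policy is a lookup table from risk labels to actions, where the most severe action wins when several labels fire at once. The sketch below assumes exactly that design. The label names and the action assigned to each are hypothetical and would be set by each organization's own security policy.

```python
from enum import Enum
from typing import Dict, Iterable


class Action(Enum):
    # Ordered by severity so the strictest applicable action can be selected.
    ALLOW = 0
    WARN_USER = 1
    FLAG_FOR_REVIEW = 2
    TERMINATE = 3


# Hypothetical policy table; labels and actions are illustrative only.
POLICY: Dict[str, Action] = {
    "contact_sharing": Action.WARN_USER,
    "bank_account": Action.FLAG_FOR_REVIEW,
    "deepfake_voice": Action.TERMINATE,
}


def decide_action(labels: Iterable[str], policy: Dict[str, Action] = POLICY) -> Action:
    """Pick the most severe action among all detected risk labels.

    Labels missing from the policy default to a user warning; no labels
    at all means the call proceeds untouched.
    """
    actions = [policy.get(label, Action.WARN_USER) for label in labels]
    return max(actions, key=lambda action: action.value, default=Action.ALLOW)


print(decide_action(["contact_sharing", "bank_account"]))
print(decide_action([]))
```

Keeping the policy as data rather than code makes it easier to adjust per customer or per call type without touching the detection logic.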

This advancement empowers organizations and users alike to proactively prevent data exploitation, not just react to it after the fact.

Telecom-Ready Implementation

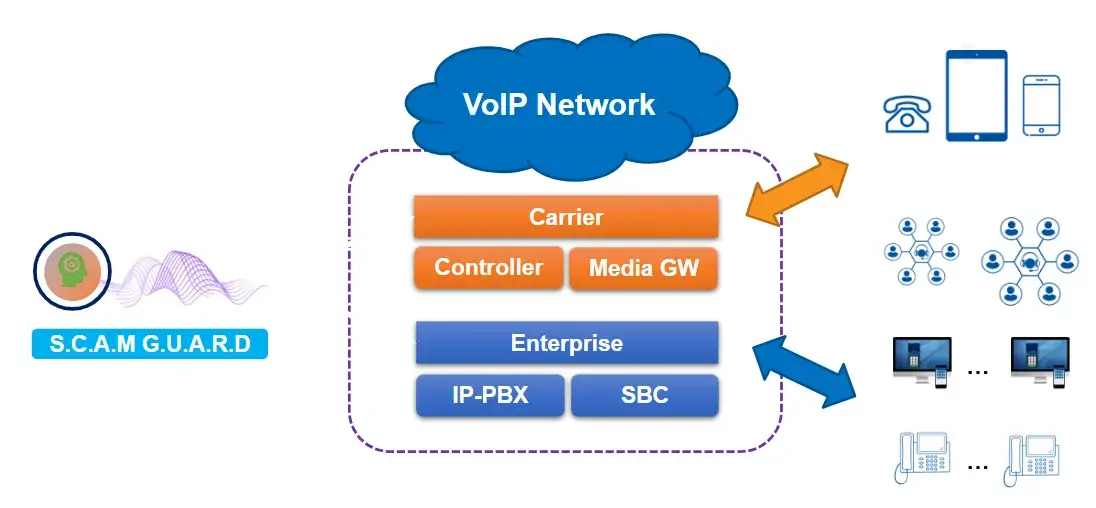

These AI models are designed to operate seamlessly in modern telecom environments, especially VoIP (Voice over IP) networks. Key integration features include:

- Real-time processing of SIP/RTP streams

- Compatibility with common systems like Asterisk PBX, IP-PBX, and SBCs

- Flexible deployment across cloud, on-premise, or hybrid infrastructures

As voice threats evolve, embedding AI directly into the telecom stack ensures that security becomes a core function of communication, not an afterthought.
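To make the RTP side of this concrete, the sketch below parses the fixed 12-byte RTP header defined in RFC 3550 and locates the audio payload that a detection model would consume. It is a simplified illustration: it skips CSRC entries and header extensions to find the payload but does not strip padding, handle SRTP encryption, or reorder packets. The sample packet is synthetic.

```python
import struct
from typing import NamedTuple


class RtpHeader(NamedTuple):
    version: int
    payload_type: int
    sequence_number: int
    timestamp: int
    ssrc: int
    payload_offset: int  # index of the first payload byte in the packet


def parse_rtp_header(packet: bytes) -> RtpHeader:
    """Parse the fixed RTP header (RFC 3550) and compute where the payload starts."""
    if len(packet) < 12:
        raise ValueError("packet shorter than the 12-byte fixed RTP header")
    first, second, seq, timestamp, ssrc = struct.unpack("!BBHII", packet[:12])
    version = first >> 6
    if version != 2:
        raise ValueError(f"unsupported RTP version: {version}")
    has_extension = bool(first & 0x10)
    csrc_count = first & 0x0F
    offset = 12 + 4 * csrc_count  # each CSRC identifier is 4 bytes
    if has_extension:
        if len(packet) < offset + 4:
            raise ValueError("truncated RTP header extension")
        # Extension header: 16-bit profile id, then length in 32-bit words.
        _, ext_words = struct.unpack("!HH", packet[offset:offset + 4])
        offset += 4 + 4 * ext_words
    if len(packet) < offset:
        raise ValueError("packet shorter than its declared header")
    return RtpHeader(version, second & 0x7F, seq, timestamp, ssrc, offset)


# Synthetic packet: version 2, payload type 0 (PCMU), 160 bytes of audio
# (20 ms at 8 kHz).
packet = struct.pack("!BBHII", 0x80, 0, 4660, 160, 0xDEADBEEF) + b"\xff" * 160
header = parse_rtp_header(packet)
audio_payload = packet[header.payload_offset:]
print(header.payload_type, header.sequence_number, len(audio_payload))
```

In a real deployment the payload would then be decoded according to its payload type and buffered by sequence number before being passed to the analysis models.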

TMA Approach

ScamGuard Features

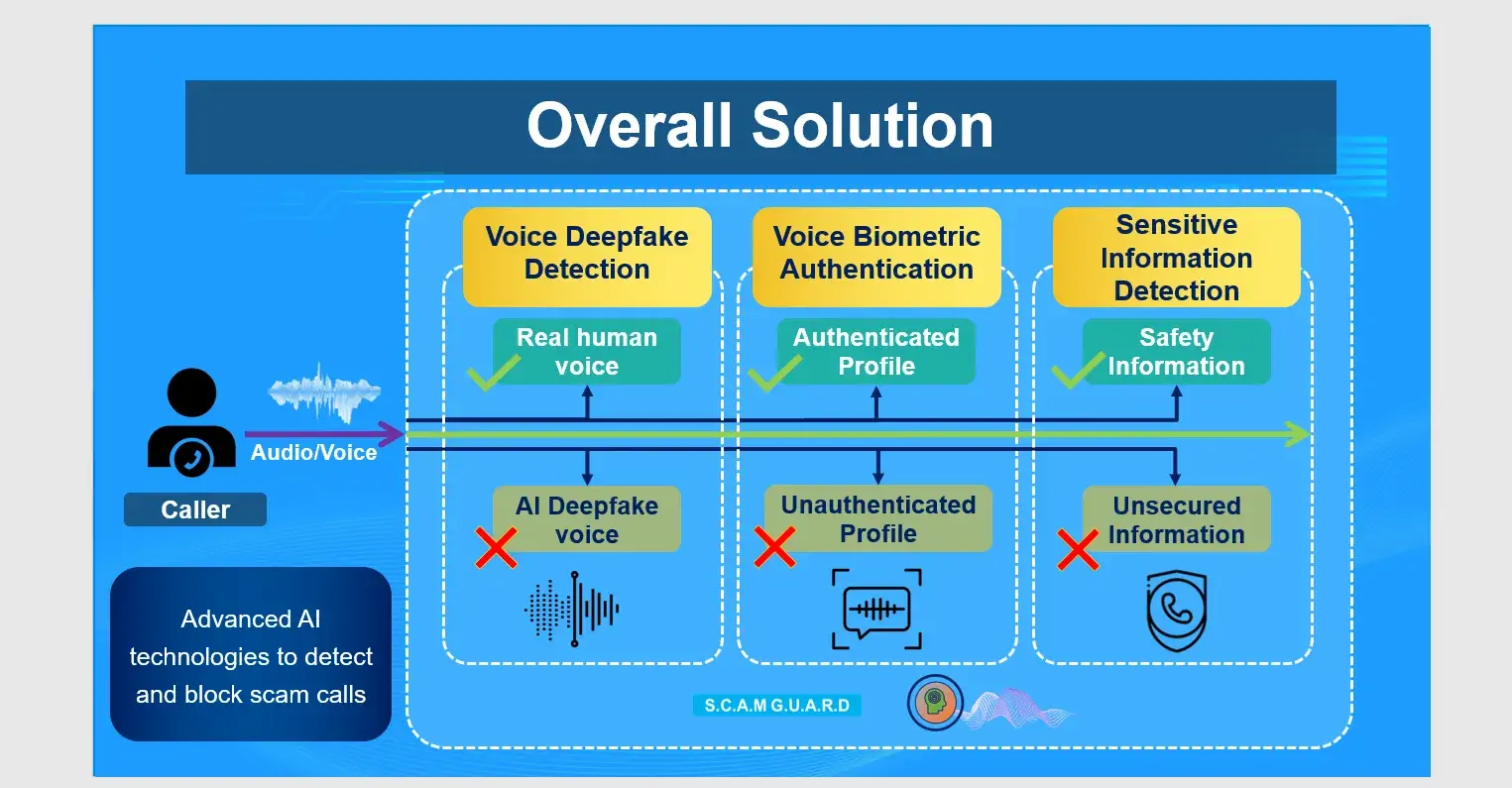

TMA has developed ScamGuard, an advanced AI-powered solution designed to protect users from voice-based fraud. It leverages cutting-edge artificial intelligence and natural language processing to enhance security and reliability, offering a strong defense against increasingly sophisticated voice-based attacks that target both individuals and organizations.

Its key features include:

- Deepfake Voice Detection: Accurately identifies and blocks AI-generated or manipulated voice recordings used in fraudulent activities.

- User Authentication: Verifies the identity of already signed-in users, particularly internal company personnel, to ensure secure communication.

- Sensitive Word Detection: Monitors conversations in real time and flags any use of suspicious or sensitive language.

ScamGuard is designed for seamless integration into any existing VoIP network infrastructure, making deployment fast and hassle-free. Whether your organization uses on-premise systems or cloud-based communication platforms, ScamGuard can be easily embedded without requiring major changes to your current setup. This flexibility ensures minimal disruption to operations while enabling immediate protection against voice-based fraud. With its compatibility across diverse VoIP environments, ScamGuard empowers businesses to enhance security without compromising performance or user experience.

Benefits of Deploying ScamGuard

- Enhanced Security and Fraud Prevention: ScamGuard uses advanced AI and NLP to detect and block deepfake voice recordings and fraudulent activities in real time, significantly reducing the risk of financial losses.

- Seamless User Experience: With automated user authentication running in the background, ScamGuard ensures secure communication without disrupting user or employee workflows. Real-time sensitive word detection provides instant alerts for suspicious activity, enhancing user trust and confidence.

- Scalable and Easy Integration: ScamGuard integrates effortlessly with existing VoIP systems and call center infrastructures, minimizing deployment costs and complexity.

- Regulatory Compliance and Reputation Protection: By preventing unauthorized access and protecting sensitive information, ScamGuard helps organizations comply with stringent regulations like GDPR and HIPAA. Proactive fraud prevention also shields businesses from reputational damage caused by voice-based scams.

- Builds Trust with Secure and Authentic Interactions: ScamGuard ensures that all voice-based communications are authentic by verifying user identities and detecting manipulated audio. This fosters trust among employees, clients, and partners, strengthening relationships and enhancing organizational credibility.

- Automation Saves Time and Resources: By automating fraud detection and user authentication, ScamGuard reduces the need for manual monitoring and intervention. This streamlines security processes, allowing organizations to allocate resources more efficiently and focus on core operations.

Conclusion

AI voice cloning and real-time fraud tactics are no longer futuristic threats — they are today’s reality. From identity theft to misinformation campaigns, the damage caused by synthetic voices is significant and growing.

But with the same sophistication that enables these attacks, AI also provides the tools to fight back. By combining deepfake detection, speaker authentication, and sensitive information monitoring, organizations can transform their voice networks from vulnerable entry points into secure communication channels.

As telecom continues its evolution, AI will be the defining factor in securing the most human element of all: our voices.