Introduction to the Agent2Agent (A2A) protocol

Overview and objective

The Agent2Agent (A2A) protocol is a new open-source standard initiated by Google with the mission to enable artificial intelligence agents (AI agents) to communicate, securely exchange information, and act collaboratively across different platforms, applications, frameworks, and enterprise vendors. The advent of A2A addresses one of the most serious challenges for implementing AI in the enterprise: the lack of interoperability between agents built on different technologies. The current state of siloed AI systems has significantly limited the potential to automate complex processes.

The main goal of A2A is to foster a multi-agent ecosystem where agents can collaborate to automate complex enterprise workflows. This is expected to increase autonomy, improve productivity, and reduce long-term integration costs. In essence, A2A provides a “common language” allowing agents to communicate effectively regardless of the framework or vendor they are built on.

The emphasis on A2A as an “open source standard” that is nonetheless initiated and promoted by a major vendor (Google) suggests a strategic move to establish a de facto standard in the nascent agent ecosystem. While openness encourages widespread adoption, Google’s central role (based on its internal experience scaling agentic systems) can still influence the direction of the protocol’s development and potentially create dependencies. The long-term success of A2A will depend on truly effective community governance rather than control by a single vendor, even though Google has established clear contribution paths. In other words, Google is trying to make A2A the default or most popular choice in the market, even though it is not yet formally recognized by an independent standards organization.


Development context

The A2A protocol was developed based on Google's practical experience in scaling internal agentic systems and addressing the challenges identified when deploying large-scale multi-agent systems for clients. This shows that A2A is not just theoretical but also rooted in practical needs and operational experience.

A2A was announced on April 9, 2025, with initial support from more than 50 technology partners (such as Atlassian, Box, Salesforce, SAP, ServiceNow, LangChain) and leading service providers (such as Accenture, Deloitte). This broad support demonstrates significant market interest and real-world demand for such a standard, lending credibility and hinting at the potential for widespread adoption. Partners emphasize the value of A2A in enabling richer forms of delegation and collaboration at scale.

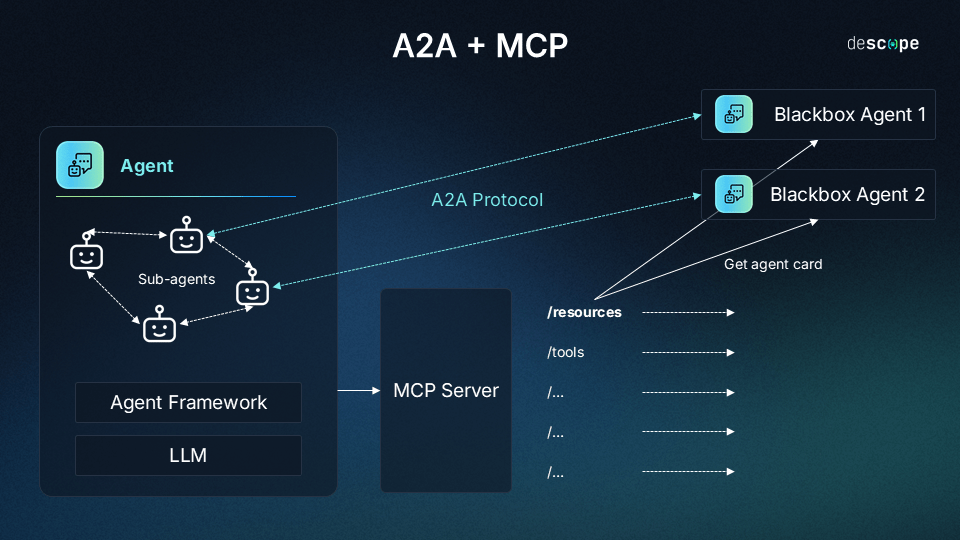

A key element in the development landscape is A2A’s position relative to Anthropic’s Model Context Protocol (MCP). A2A is positioned as complementary to MCP. While MCP focuses on agent-to-tool/resource communication, A2A focuses on agent-to-agent communication. A2A allows agents to communicate “as agents (or users) rather than tools”, where tools typically have structured input/output (I/O) and behavior, and agents are more autonomous and can tackle new tasks. However, there are also some libraries and real-world products that support both protocols.

The timing of the announcement of A2A, described as “could not be more timely, following the rapid adoption of MCP”, coupled with its positioning as complementary to MCP, suggests a strategic move by Google. Google appears to be aiming to define an inter-agent communication layer, potentially becoming the central coordination standard, while acknowledging the role of MCP in interactions with tools. This approach creates a layered architecture for agentic systems, where A2A handles high-level communication between agents and MCP handles lower-level interactions with resources. This could accelerate the development of complex multi-agent systems, but could also introduce complexity in managing interactions across both protocols.

Technical architecture of A2A protocol

Client-remote communication model

The core architecture of A2A is based on a communication model between a "client" agent and a "remote" agent. The client agent is responsible for formulating and communicating tasks, while the remote agent is responsible for executing those tasks to provide information or perform the required action. The remote agent is often viewed as a "black box", meaning that the client agent does not need to know how the remote agent is implemented internally.

The main architectural components include:

- A2A Client: An application or other agent that initiates A2A requests and consumes services provided by the A2A Server.

- A2A Server: An agent that implements an HTTP endpoint that complies with the A2A protocol methods. It receives requests from the A2A Client and manages task execution through the remote agent it represents.

- Agent Card: A public metadata file that describes an agent's capabilities, skills, endpoint URLs, and authentication requirements. The client uses this information to discover and connect to appropriate remote agents.

Web standards (HTTP, SSE, JSON-RPC)

A key design decision for A2A was to build on popular and widely established web standards, including HTTP(S), Server-Sent Events (SSE), and JSON-RPC 2.0. Leveraging these familiar technologies is intended to lower the barrier to adoption and simplify the integration of A2A into an enterprise’s existing infrastructure and technology stacks.

Specifically, communication between the client and server uses JSON-RPC 2.0 messages transmitted over HTTP(S). For long-running tasks, A2A supports Server-Sent Events (SSE) so that the server can push real-time status updates to the client.
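As a rough illustration of this message shape, the sketch below builds a JSON-RPC 2.0 request for the tasks/send method that appears in the communication flow. The envelope fields (jsonrpc, id, method, params) come from JSON-RPC 2.0 itself. The layout of params (a task id plus a message with a role and parts) follows the early A2A samples and is an assumption that should be checked against the current specification. The function name build_send_request is purely illustrative.

```python
import json
import uuid


def build_send_request(user_text, task_id=None):
    """Build a JSON-RPC 2.0 request body for the A2A tasks/send method."""
    return {
        "jsonrpc": "2.0",          # fixed version string required by JSON-RPC 2.0
        "id": str(uuid.uuid4()),   # request id, echoed back in the response
        "method": "tasks/send",
        "params": {
            # Assumed params layout: a task id plus the initial user message.
            "id": task_id or str(uuid.uuid4()),
            "message": {
                "role": "user",
                "parts": [{"type": "text", "text": user_text}],
            },
        },
    }


request = build_send_request("Convert 100 USD to EUR", task_id="task-001")
# This string is what a client would send via HTTP POST to the A2A Server URL.
body = json.dumps(request)
```

Sending the body is left out on purpose: any HTTP client can POST it with a Content-Type of application/json.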

Relying on existing standards has obvious benefits in terms of integration speed and familiarity for developers. However, it also means that A2A inherits the limitations or potential complexities of those underlying protocols. For example, the request/response nature of HTTP may not be well suited to complex, persistent agent interactions, and managing persistent SSE connections at scale can be challenging. The choice of JSON-RPC indicates a preference for structured procedure calls between agents, which may be more intuitive for procedural tasks but are potentially less flexible than purely RESTful approaches for resource-oriented interactions.

Communication flow and main components

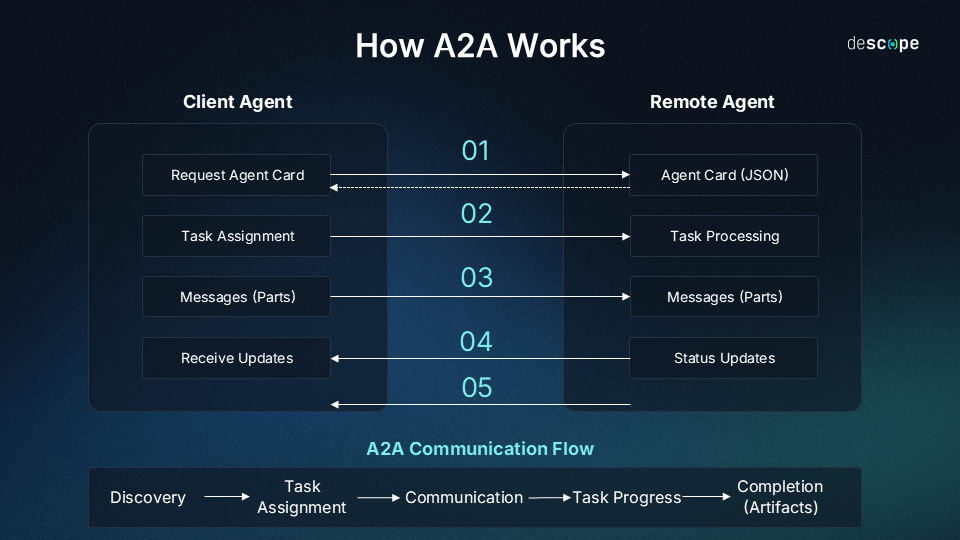

The typical communication flow in A2A follows these five steps:

- Discovery: The client fetches the remote agent's Agent Card (typically from a well-known URL like /.well-known/agent.json) to learn about that agent's capabilities, endpoints, and authentication requirements.

- Initiation: The client sends a task request to the A2A Server URL. This request typically uses the tasks/send method (for regular tasks) or tasks/sendSubscribe (if the client wants to receive streaming updates). The request contains the initial message (e.g., the user query) and a unique task ID.

- Processing: The A2A Server receives the request, creates a new Task, and starts managing the task execution through the remote agent. The Server is responsible for monitoring the Task's lifecycle.

- Communication/Updates: During task processing, the client and server can exchange Messages containing Parts of content. For long-running tasks and if the client has subscribed, the server will send status updates (e.g. TaskStatusUpdateEvent, TaskArtifactUpdateEvent) via SSE. Additionally, the server can send Push Notifications to a webhook provided by the client.

- Completion: The task ends in a final state (e.g. completed, failed, canceled). If successful, the remote agent can return Artifacts representing the results of the task.
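The five steps above can be sketched end to end without any networking. In the toy example below, handle_rpc plays the role of the A2A Server and run_flow plays the client. Both functions, the Echo Agent card, and the uppercase "work" it performs are hypothetical; a real client would fetch the card and send the request over HTTPS.

```python
import uuid

# Hypothetical Agent Card for an in-memory toy agent; no HTTP is involved.
AGENT_CARD = {"name": "Echo Agent", "url": "https://agent.example.com/a2a"}


def handle_rpc(request):
    """Toy A2A Server: create a Task, run it, and return its final state."""
    if request["method"] != "tasks/send":
        # -32601 is the JSON-RPC 2.0 "Method not found" error code.
        return {"jsonrpc": "2.0", "id": request["id"],
                "error": {"code": -32601, "message": "Method not found"}}
    params = request["params"]
    text = params["message"]["parts"][0]["text"]
    task = {
        "id": params["id"],
        "status": {"state": "completed"},
        # The "work" of this toy agent is to uppercase the input text.
        "artifacts": [{"parts": [{"type": "text", "text": text.upper()}]}],
    }
    return {"jsonrpc": "2.0", "id": request["id"], "result": task}


def run_flow(user_text):
    # 1. Discovery: a real client would download the Agent Card.
    card = AGENT_CARD
    # 2. Initiation: send the first message with a unique task ID.
    request = {
        "jsonrpc": "2.0",
        "id": 1,
        "method": "tasks/send",
        "params": {
            "id": str(uuid.uuid4()),
            "message": {"role": "user",
                        "parts": [{"type": "text", "text": user_text}]},
        },
    }
    # 3-4. Processing and updates happen inside the server.
    response = handle_rpc(request)
    # 5. Completion: read the final state and the resulting Artifact.
    task = response["result"]
    return card["name"], task["status"]["state"], task["artifacts"][0]["parts"][0]["text"]


result = run_flow("hello")
```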

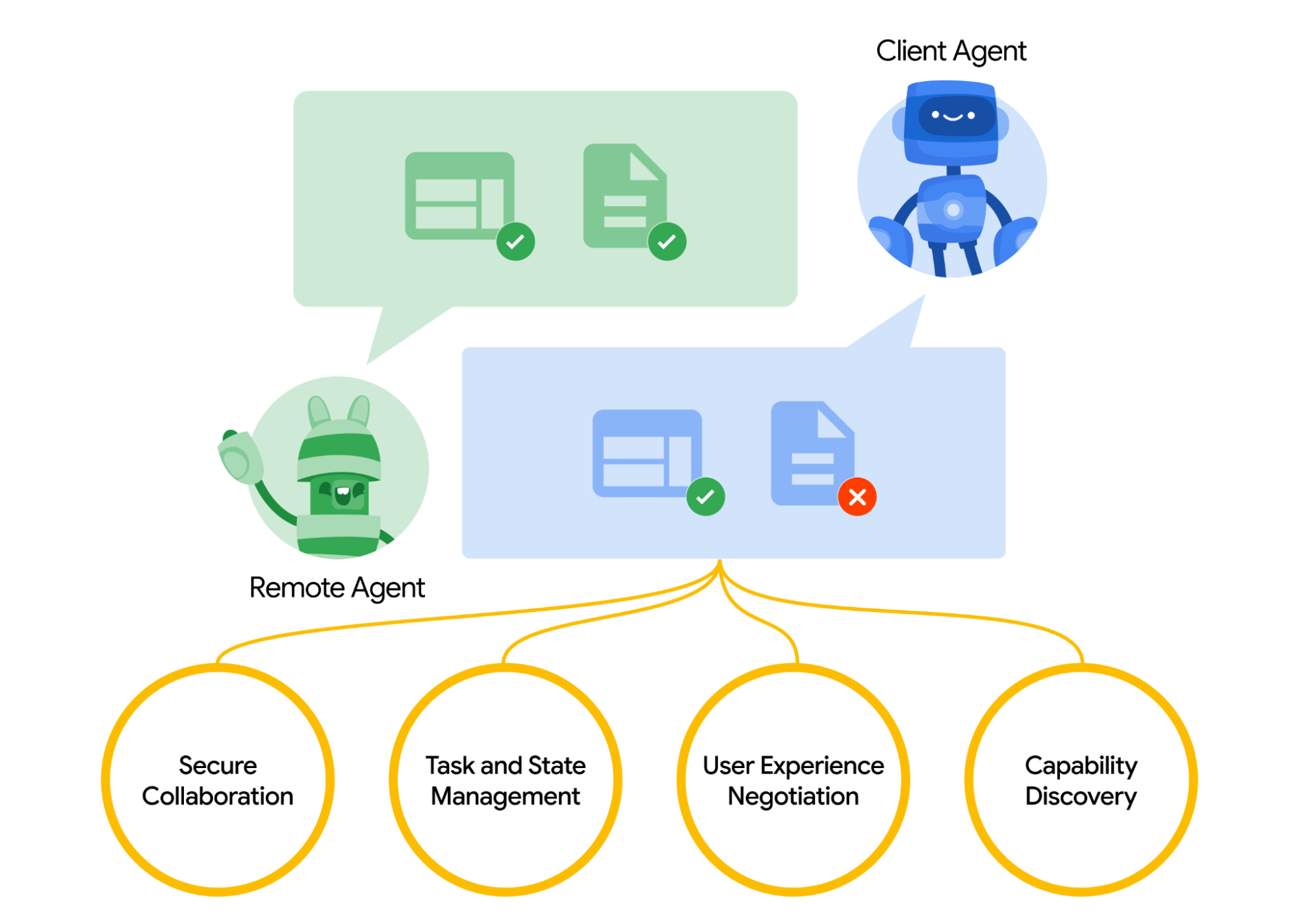

This architecture and communication flow supports several key interoperability capabilities:

- Capability Discovery: Find the right agent.

- Task Management: Define, execute, and track tasks.

- Agent Collaboration: Exchange information and context via messaging.

- User Experience Negotiation: Agree on display formats.

Core concepts in A2A protocol

Agent card and capability discovery

Agent Cards are a foundational component for discovery in the A2A ecosystem. They are public metadata files, typically in JSON format, and are provided at a standardized path (e.g. /.well-known/agent.json) on the agent server. Agent Cards contain important information describing the agent, including: name, description, version, capabilities or skills the agent provides, endpoint URLs where the agent can be accessed, and authentication requirements needed to interact.

The main function of Agent Cards is to allow client agents or other systems to discover available remote agents and understand their capabilities and how to interact with them. They function similarly to the API description of a service or the DNS mechanism in the internet world.
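To make the discovery step more concrete, here is a minimal sketch of an Agent Card and a client-side lookup. The card content is invented for illustration, and the field names (capabilities, authentication.schemes, skills, tags) follow the early A2A samples, so treat them as assumptions. The helpers well_known_url and find_skills are hypothetical names, not part of any official library.

```python
import json
from urllib.parse import urljoin

# Illustrative Agent Card; the values are made up for this example.
AGENT_CARD_JSON = """
{
  "name": "Currency Agent",
  "description": "Converts amounts between currencies.",
  "url": "https://agent.example.com/a2a",
  "version": "0.1.0",
  "capabilities": {"streaming": true, "pushNotifications": false},
  "authentication": {"schemes": ["bearer"]},
  "skills": [
    {"id": "convert", "name": "Currency conversion", "tags": ["finance", "fx"]}
  ]
}
"""


def well_known_url(base_url):
    """Return the conventional Agent Card location for a server base URL."""
    return urljoin(base_url, "/.well-known/agent.json")


def find_skills(card, tag):
    """Return the ids of all skills in the card that carry the given tag."""
    return [s["id"] for s in card.get("skills", []) if tag in s.get("tags", [])]


card = json.loads(AGENT_CARD_JSON)
card_url = well_known_url("https://agent.example.com")
matching = find_skills(card, "fx")
```

A client would typically download the card from card_url first, then decide from the skills and capabilities whether the agent is worth contacting.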

Task: Lifecycle and management

Tasks are the central unit of work or conversation in the A2A protocol, initiated by a client agent. Each Task represents a specific goal or query that needs to be processed by the remote agent.

Each Task is assigned a unique identifier (Task ID) and goes through a well-defined lifecycle with specific states. These states include submitted, working, input-required, and final states such as completed, failed, or canceled.

Managing Tasks along this lifecycle is important, especially for long-running tasks, as it allows for progress tracking and keeps both the client and remote agent in sync about the current state of the task. The final output of a Task is called an “Artifact.”
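The lifecycle can be pictured as a small state machine. The state names below are the ones listed above; the transition table is an assumption made for this sketch (the text does not enumerate which transitions are legal), and advance is a hypothetical helper.

```python
from enum import Enum


class TaskState(Enum):
    SUBMITTED = "submitted"
    WORKING = "working"
    INPUT_REQUIRED = "input-required"
    COMPLETED = "completed"
    FAILED = "failed"
    CANCELED = "canceled"


# Final states: no further transitions are allowed from these.
TERMINAL = {TaskState.COMPLETED, TaskState.FAILED, TaskState.CANCELED}

# Assumed transition table, for illustration only.
ALLOWED = {
    TaskState.SUBMITTED: {TaskState.WORKING, TaskState.FAILED, TaskState.CANCELED},
    TaskState.WORKING: {TaskState.INPUT_REQUIRED} | TERMINAL,
    TaskState.INPUT_REQUIRED: {TaskState.WORKING, TaskState.FAILED, TaskState.CANCELED},
}


def advance(current, new):
    """Return the new state, or raise if the transition is not allowed."""
    if new not in ALLOWED.get(current, set()):
        raise ValueError(f"illegal transition: {current.value} -> {new.value}")
    return new


state = TaskState.SUBMITTED
for nxt in (TaskState.WORKING, TaskState.INPUT_REQUIRED,
            TaskState.WORKING, TaskState.COMPLETED):
    state = advance(state, nxt)
```

Keeping the table explicit makes it easy for both client and server to reject updates that arrive out of order.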

Message, part, and artifact

Communication within a Task occurs through the exchange of Messages between the client and the remote agent. These messages are role-specific: role: "user" for messages from the client and role: "agent" for messages from the remote agent. Messages not only contain the initial request or final result, but also allow for the exchange of context, partial responses, requests for clarification, or instructions from the user, facilitating richer collaboration than a single request/response.

Each Message (and Artifact) is composed of one or more Parts. A Part is the basic unit of content, and each Part has a clearly specified content type (e.g., a MIME type). The defined Part types include TextPart (for text content), FilePart (for files, which can contain inline binary data or a URI pointing to a file), and DataPart (for structured JSON data, e.g., form data).

This Part structure is the foundation for User Experience Negotiation. Clients and remote agents can rely on supported Part types to agree on the format and presentation of information that best suits the client’s user interface (UI) capabilities (e.g., displaying plain text, embedding iframes, playing video, displaying web forms).
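One simple way to picture this negotiation is as a filter over the Part types a client can render. The sketch below is an assumption about how a client or agent might apply it, not a mechanism defined by the protocol; select_parts and the sample parts are hypothetical.

```python
def select_parts(offered_parts, supported_types):
    """Keep only the parts whose type the client UI is able to render."""
    return [p for p in offered_parts if p["type"] in supported_types]


# Parts an agent might offer for the same piece of information.
offered = [
    {"type": "text", "text": "Please confirm the expense details."},
    {"type": "data", "data": {"form": {"amount": None, "date": None}}},
    {"type": "file", "file": {"mimeType": "video/mp4",
                              "uri": "https://example.com/help.mp4"}},
]

# A plain chat window renders text only; a richer UI also renders forms.
text_only_client = select_parts(offered, {"text"})
form_capable_client = select_parts(offered, {"text", "data"})
```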

Artifacts represent the output products produced by the remote agent during the execution of a Task. These can be generated files (e.g., reports, images), structured data, or the final result of a query. Like Messages, Artifacts also contain one or more Parts that make up the output content.
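The relationship between Messages, Parts, and Artifacts can be modeled with a few data classes. This is a sketch for orientation only: the class and Part type names mirror the concepts above, while the attribute names (for example mime_type, data_base64) are assumptions and do not necessarily match the wire format of the specification.

```python
from dataclasses import asdict, dataclass, field
from typing import Any, Dict, List, Optional, Union


@dataclass
class TextPart:
    text: str
    type: str = "text"


@dataclass
class FilePart:
    mime_type: str
    uri: Optional[str] = None          # either a link to the file ...
    data_base64: Optional[str] = None  # ... or inline binary data
    type: str = "file"


@dataclass
class DataPart:
    data: Dict[str, Any]               # structured JSON, e.g. form data
    type: str = "data"


Part = Union[TextPart, FilePart, DataPart]


@dataclass
class Message:
    role: str                          # "user" (client) or "agent" (remote)
    parts: List[Part] = field(default_factory=list)


@dataclass
class Artifact:
    parts: List[Part]
    name: Optional[str] = None


msg = Message(role="user", parts=[
    TextPart("Summarize the attached report"),
    FilePart(mime_type="application/pdf", uri="https://example.com/report.pdf"),
])
artifact = Artifact(parts=[DataPart(data={"pages": 3})], name="summary")
wire = asdict(msg)  # nested dict that can be passed to json.dumps
```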

Streaming and push notifications

To address the challenge of tasks that cannot be completed immediately, A2A provides two asynchronous state update mechanisms:

- Streaming via SSE: Servers that support streaming capabilities can accept requests via the tasks/sendSubscribe method instead of tasks/send. When a client uses this method, the server keeps the connection open and sends real-time progress updates in the form of Server-Sent Events (SSE). These events are typically TaskStatusUpdateEvent (task status update) or TaskArtifactUpdateEvent (created artifact update).

- Push Notifications via Webhooks: Servers that support pushNotifications can proactively send Task status updates to a client-provided webhook URL. The client configures this webhook URL via the tasks/pushNotification/set method. This mechanism provides an alternative to maintaining a persistent SSE connection, suitable for clients that cannot or do not want to keep the connection open. Sample implementations show that the use of webhooks can be coupled with an authentication mechanism (e.g., JWK).
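On the client side, consuming the SSE stream mostly means collecting data: lines until a blank line ends the event. The parser below is a minimal sketch of that part of the SSE format and ignores other fields such as event: or id:. The sample payloads are simplified assumptions; real A2A events carry more fields and are wrapped in a JSON-RPC response.

```python
import json


def parse_sse(lines):
    """Yield the decoded JSON payload of each event in an SSE line stream."""
    data_lines = []
    for raw in lines:
        line = raw.rstrip("\n")
        if line == "":
            # A blank line terminates the current event.
            if data_lines:
                yield json.loads("\n".join(data_lines))
                data_lines = []
        elif line.startswith("data:"):
            value = line[len("data:"):]
            # The SSE format allows one optional space after the colon.
            if value.startswith(" "):
                value = value[1:]
            data_lines.append(value)
        # Other fields (event:, id:, retry:) and comments are ignored here.


# Simplified stand-in for what a tasks/sendSubscribe stream might deliver.
SAMPLE_STREAM = [
    'data: {"id": "task-001", "status": {"state": "working"}, "final": false}',
    '',
    'data: {"id": "task-001", "status": {"state": "completed"}, "final": true}',
    '',
]

events = list(parse_sse(SAMPLE_STREAM))
states = [e["status"]["state"] for e in events]
```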

Providing both SSE and webhooks reflects a pragmatic design that recognizes that no single update mechanism fits all use cases for complex, long-running agent interactions in diverse enterprise environments. SSE is suited for clients with active connectivity, while webhooks serve scenarios where clients may not maintain persistent connectivity or prefer event-based updates. This architectural choice directly addresses the practical challenges of non-instantaneous enterprise workflows, providing flexibility in implementation but potentially increasing protocol complexity.

Key features and benefits

Agentic and multimodal interaction capabilities

One of the core strengths of A2A is the ability to allow agents to collaborate using their “natural, unstructured methods” rather than being limited to predefined “tools” with rigid I/O. This paves the way for truly multi-agent scenarios where agents can coordinate more flexibly.

A2A is designed to be “modality agnostic”, meaning it is not limited to text-based communication alone. The protocol supports a variety of media formats, including audio and video streams, images, files, and structured data. This is accomplished through the aforementioned Part mechanism, where each piece of content can define its data type.

Secure and enterprise integration

A2A is designed with the goal of being “secure by default”, supporting enterprise-grade authentication and authorization mechanisms. The protocol is compatible with OpenAPI authentication schemes, and the Agent Card is where agents declare their authentication requirements.

The protocol is built to address integration challenges in enterprise environments and support core enterprise requirements such as secure collaboration. The implementation libraries also emphasize production readiness. Some sources mention the use of encryption and authentication methods, as well as Role-Based Access Control (RBAC).

Despite claims of “enterprise-grade security”, the specifics in Google’s official documentation (blog, GitHub README) refer primarily to support for OpenAPI authentication schemes and declarations in the Agent Card. The security of the communication channel relies primarily on the use of HTTPS. Further details on encryption or RBAC appear in secondary sources. This suggests that A2A enables secure implementations by supporting standard authentication methods and relying on HTTPS, but the actual level of security depends heavily on how it is implemented and configured by developers and organizations. The protocol does not appear to introduce new layers of security beyond specifying how authentication is declared and handled using existing schemes. Enterprise security readiness requires careful implementation following best practices.
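Since the protocol mainly declares which authentication schemes an agent expects, most of the work falls to the client. The sketch below shows one way a client might turn that declaration into HTTP headers. The authentication.schemes field follows the early Agent Card samples and is an assumption, as are the build_auth_headers helper and the shape of the credentials dictionary. Only the standard Bearer and Basic header formats are used.

```python
import base64


def build_auth_headers(card, credentials):
    """Pick the first declared scheme the client has credentials for."""
    schemes = card.get("authentication", {}).get("schemes", [])
    if not schemes:
        return {}  # the agent declares no authentication requirement
    for scheme in schemes:
        key = scheme.lower()
        if key == "bearer" and "bearer" in credentials:
            return {"Authorization": f"Bearer {credentials['bearer']}"}
        if key == "basic" and "basic" in credentials:
            user, password = credentials["basic"]
            token = base64.b64encode(f"{user}:{password}".encode()).decode()
            return {"Authorization": f"Basic {token}"}
    raise ValueError(f"no usable credentials for schemes: {schemes}")


card = {"url": "https://agent.example.com/a2a",
        "authentication": {"schemes": ["bearer"]}}
headers = build_auth_headers(card, {"bearer": "example-token"})
```

Whatever scheme is chosen, the headers should only ever be sent to an https:// endpoint, since the protocol relies on HTTPS for channel security.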

Long-running tasks support

A core design principle of A2A is the ability to support tasks that can span hours or even days, potentially including human-in-the-loop involvement. For such complex workflows, A2A provides mechanisms to provide real-time feedback, notifications, and status updates throughout the process, via SSE streaming or push notifications.

Integration and scalability

As mentioned, A2A builds on existing web standards (HTTP, SSE, JSON-RPC), which simplifies integration into existing enterprise IT stacks.

The open source nature of A2A, with a public repository on GitHub (google/A2A) and clear contribution paths, encourages community engagement and ongoing development of the protocol. The repository provides a specification, common libraries (Python, JS/TS), agent implementation examples for many popular frameworks (such as LangGraph, CrewAI, Google ADK, Genkit, LlamaIndex, Marvin, Semantic Kernel), and demo applications. The presence of third-party libraries also suggests potential for integration and extension. The protocol is designed to be flexible and extensible.

Table 1: Key Features of A2A

| Feature | Description | Key Benefit |
| --- | --- | --- |
| Agentic Interoperability | Enable agents to communicate and collaborate across framework/vendor boundaries. | Break down silos, create multi-agent ecosystems. |
| Build on Existing Standards | Use HTTP, SSE, JSON-RPC. | Easily integrate into existing IT infrastructure. |
| Secure by Default | Support enterprise-grade authentication/authorization (OpenAPI compatible). | Ensure secure agent communication in the enterprise. |
| Support for Long-Running Tasks | Handle tasks spanning hours/days with status updates (SSE/Push). | Automate complex, asynchronous processes. |
| Modality Agnostic | Support multiple data formats (text, audio, video, files, JSON). | Flexibly handle diverse types of information. |
| Capability Discovery | Agents advertise their capabilities via Agent Cards (JSON). | Allow clients to find and select the right agent. |
| Task Management | Define and track Task lifecycles and status. | Ensure structured and synchronized task processing. |
| Agent Collaboration | Exchange Messages containing context, instructions, artifacts. | Enable more complex, two-way interactions. |
| User Experience Negotiation | Unify display formats via Parts and content types. | Ensure information presentation is consistent with the client UI. |
| Open Source | Protocol and source code available on GitHub, contributions encouraged. | Drive adoption, transparency, and community development. |

Real-world examples and use cases

Automated recruitment process

An example often used to illustrate the power of A2A is the automation of the hiring process. In this scenario:

- A hiring manager uses a unified interface (e.g. Agentspace) to assign a task to their primary agent (acting as an A2A client). The task is to find software engineering candidates that match a specific job description, location, and skill set.

- This primary agent then uses the A2A protocol to interact with other specialized agents (acting as A2A remotes). These specialized agents may be provided by different vendors or run on different systems.

- A2A interactions include:

  - The primary agent requests a sourcing agent to search for potential candidate profiles from multiple sources.

  - After receiving the list of recommendations, the manager can direct the primary agent to request a scheduling agent to schedule interviews at appropriate times.

  - A follow-up agent can be used to send interview details and status updates to the candidate.

  - The process can even extend to requesting another agent to assist with background checks.

This example illustrates how A2A enables seamless collaboration between different specialized agents to streamline a complex, multi-step enterprise workflow, all within a single user interface.
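The delegation pattern in this scenario can be sketched with plain functions standing in for remote agents. Everything here is hypothetical: the agent functions, the placeholder candidate names, and the delegate helper, which stands in for an A2A tasks/send call to whichever agent offers the requested skill.

```python
def sourcing_agent(payload):
    """Hypothetical remote agent: returns placeholder candidate profiles."""
    return {"state": "completed",
            "artifact": [f"candidate-{i} for {payload['role']}" for i in (1, 2)]}


def scheduling_agent(payload):
    """Hypothetical remote agent: requests an interview slot per candidate."""
    return {"state": "completed",
            "artifact": {c: "slot requested" for c in payload["candidates"]}}


# In a real system these would be discovered through Agent Cards.
REMOTE_AGENTS = {"sourcing": sourcing_agent, "scheduling": scheduling_agent}


def delegate(skill, payload):
    """Stand-in for sending a task to the remote agent offering `skill`."""
    result = REMOTE_AGENTS[skill](payload)
    if result["state"] != "completed":
        raise RuntimeError(f"{skill} task ended in state {result['state']}")
    return result["artifact"]


def run_hiring_workflow(role):
    """Primary (client) agent: chain the specialized agents together."""
    candidates = delegate("sourcing", {"role": role})
    return delegate("scheduling", {"candidates": candidates})


interviews = run_hiring_workflow("software engineer")
```

The primary agent only sees task states and artifacts, which matches the "black box" view of remote agents described earlier.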

Other potential applications

In addition, A2A-related implementation samples and documentation also suggest many other potential use cases:

- Specific tasks: Sample agents provided in the GitHub repository demonstrate applications such as currency conversion (using LangGraph), image generation (using CrewAI and the Gemini API), expense report processing with forms (using Google ADK), movie information retrieval via API and source code generation (using Genkit), and file chat (using LlamaIndex).

- Enterprise integration and orchestration: A2A can be used to coordinate the activities of multiple AI agents across different departments, creating complex business workflows with conditional branches. It can also serve as an interface to integrate legacy systems into the modern AI ecosystem.

- Enhancing existing applications: For example, a chat application can integrate with a sentiment analysis agent through A2A to assess user emotions in real time without having to build that functionality in-house. A news aggregator system can use A2A to connect agents that handle different steps in the process.

- User interaction: Conceptual examples such as planning a birthday party also illustrate how agents can collaborate to accomplish user-defined goals.

The variety of these examples, from simple API calls to complex workflows and creative tasks, shows that A2A is positioned as a general-purpose protocol for many different types of agent interactions, not limited to specific domains. However, it should be noted that the specific examples that have been implemented in the source code repository (such as currency conversion, image generation) are currently relatively simple and self-contained. The realization of complex, multi-provider workflows (such as the recruitment example) depends heavily on the availability and A2A compliance of a diverse ecosystem of specialized agents. The protocol enables this vision, but its realization depends on the development and maturity of the agent ecosystem around A2A, which is still in its early stages.

Ecosystem, community and future

Open source community and partner contributions

The launch of A2A was marked by strong support from more than 50 technology partners and service providers. This broad initial participation demonstrates significant industry interest and awareness of the need to address agent interactions. Partners such as Atlassian have expressed confidence in A2A’s potential to “help agents successfully discover, coordinate, and reason with one another to enable richer forms of delegation and collaboration at scale.”

Google has released A2A as open source, with an official repository on GitHub (google/A2A). The repository provides documentation, a technical specification (in the form of JSON Schema), common libraries for Python and JavaScript/TypeScript, sample agents that integrate with various frameworks, and demo applications.

Google is committed to building the protocol collaboratively with partners and the open source community, establishing clear contribution paths, and actively seeking feedback. However, some observers have noted the absence of several important companies or frameworks (e.g., Anthropic, the original LlamaIndex, Pydantic AI) from the initial partner list, raising questions about its universal applicability or the risk of future standard fragmentation. The possibility of version fragmentation as the protocol evolves is also a potential risk.

Relationship with MCP protocol

The relationship between A2A and the Anthropic Model Context Protocol (MCP) is an important aspect that needs to be clarified. A2A is clearly positioned as a complement to MCP, not a replacement.

The two protocols address different problems but can work together synergistically:

- A2A: Focuses on Agent-to-Agent communication. It allows agents to discover each other, collaborate on tasks, coordinate actions, and communicate in natural, possibly unstructured ways.

- MCP: Focuses on Agent-to-Tool/Resource communication. It provides a standardized way for AI models to interact with external tools, data sources, and systems, often with structured inputs/outputs.

In a complex agentic system, A2A can handle high-level dialogue and coordination between agents, while MCP equips those agents with the tools (APIs, databases, etc.) needed to perform their tasks. The Google ADK (Agent Development Kit) supports MCP tools, and the A2A documentation even recommends modeling A2A agents as MCP resources. Several libraries have also been built to support both protocols.
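The layering can be illustrated with a toy agent that accepts a free-form request (the A2A side) and answers it by calling a tool with structured input and output (the role MCP plays). No A2A or MCP library is used; handle_task, the TOOLS table, and the word_count tool are hypothetical stand-ins for this sketch.

```python
def word_count(text):
    """Tool layer: structured input, structured output."""
    return {"words": len(text.split())}


# Stand-in for the tools an agent would reach through MCP.
TOOLS = {"word_count": word_count}


def handle_task(task_id, user_text):
    """Agent layer: interpret a free-form request and decide what to do."""
    if "how many words" in user_text.lower():
        target = user_text.split(":", 1)[-1].strip()
        result = TOOLS["word_count"](target)
        answer = f"The text has {result['words']} words."
        state = "completed"
    else:
        # The agent cannot proceed, so it asks the client for clarification.
        answer = "Please tell me what to do with the text."
        state = "input-required"
    return {"id": task_id, "status": {"state": state},
            "reply": {"role": "agent",
                      "parts": [{"type": "text", "text": answer}]}}


task = handle_task("task-7", "How many words are in: agents talk to agents")
```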

The clear delineation and proposed synergies between A2A and MCP point to an emerging standard architecture for complex agentic systems: A2A for high-level coordination and MCP for low-level resource interactions. However, reliance on two separate (though complementary) protocols, likely driven by different major companies (Google for A2A, Anthropic for MCP), could create integration complexity and potential future standards conflicts if their scopes overlap or evolve in significantly different directions. Developers may need to implement and manage both, and the health of the ecosystem depends on their continued alignment.

Table 2: Comparison of A2A and MCP

| Aspect | A2A Protocol | Model Context Protocol | Relationship/Synergy |
| --- | --- | --- | --- |
| Primary Goal | Enable communication and collaboration between independent AI agents | Standardize how agents/models interact with external tools and resources | Complementary: A2A for agent-agent communication, MCP for agent-tool |
| Communication Focus | Agent <-> Agent | Agent/Model <-> Tool/Resource/API | A2A handles orchestration; MCP provides execution via tools |
| Key Abstraction | Task, Message, Agent Card | Tool Definition, Function Call/Response | A2A agents can be modeled as MCP resources |
| Originator | Google | Anthropic | Two separate initiatives but positioned as compatible |
| Interaction Style | Conversational, unstructured, task-oriented, long-running | Function call, structured I/O, tool-oriented | The system can use both: A2A for the main flow, MCP for tool steps |
| Typical Use Case | Multi-agent process coordination, task delegation | Allows agents to use APIs, query databases, and run code | Build complex agents that collaborate and use tools |

Current status and development roadmap

At the time of its April 2025 launch, the A2A specification and sample source code were considered early versions and a work in progress.

Google and its partners are working to make A2A a stable standard. A production-ready version, likely version 1.0, along with official software development kits (SDKs), is expected to be released by the end of 2025.

The protocol is expected to change and evolve based on community feedback. Similar to MCP, A2A adoption may take time for the community to build applications and systems based on it.

Conclusion

Google’s Agent2Agent (A2A) protocol represents a significant effort to address the interoperability challenge in the rapidly evolving AI agent ecosystem. By providing an open standard based on common web technologies, A2A promises to facilitate seamless collaboration between agents built on different platforms and by different vendors. Core features such as Agent Card capability discovery, structured task management, long-running task support, and modality-agnostic design provide a solid foundation for building complex multi-agent systems and automating business processes.

Positioning A2A as complementary to MCP suggests a vision of a layered architecture for agentic applications, where A2A manages high-level communication between agents and MCP handles low-level interactions with tools and resources. While this approach has great potential, it also requires ongoing coordination and alignment between the standards and the relevant communities to avoid unnecessary fragmentation or complexity.

The success of A2A will depend on many factors: widespread industry adoption (beyond the initial partners), the maturity of the specification and SDK, the development of a diverse A2A-compliant agent ecosystem, and the ability to maintain a truly open and collaborative governance model. Although still in its early stages, with a production release expected by the end of 2025, A2A has the potential to reshape the way AI systems are built and interact, ushering in a new era of agent-based interoperability and automation. Developers, architects, and technology leaders should closely monitor the evolution of A2A and the ecosystem surrounding it to assess the opportunities and challenges of integrating it into their AI strategies.

Applying the A2A protocol to develop AI agents at TMA Solutions

As part of improving its intelligent automation capabilities, TMA Solutions has researched and applied the A2A protocol to its Virtual Assistant system (T-VA – TMA Virtual Assistant). The protocol allows AI agents not only to operate independently but also to exchange information, coordinate, and share tasks with each other flexibly. As a result, T-VA can build complex processes such as coordinating data between multiple departments, supporting multi-channel clients, or synthesizing reports from multiple sources from a single input request. Applying the A2A protocol expands the capabilities of each individual agent, creating a multi-agent collaborative virtual assistant system that improves efficiency and accuracy in handling real business operations.
